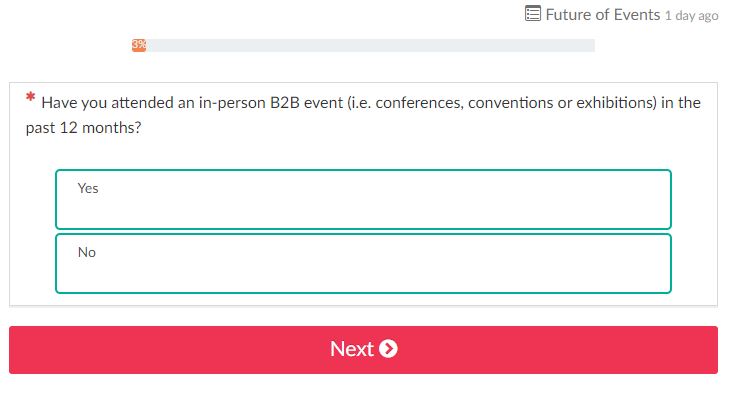

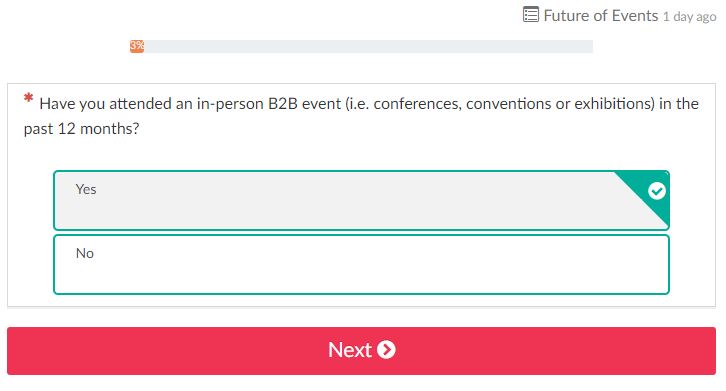

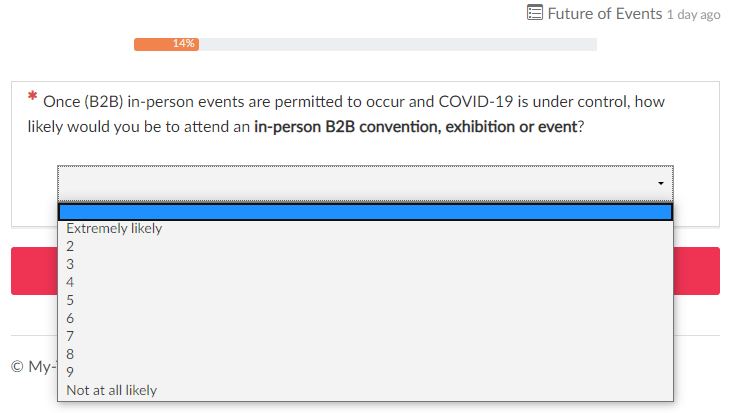

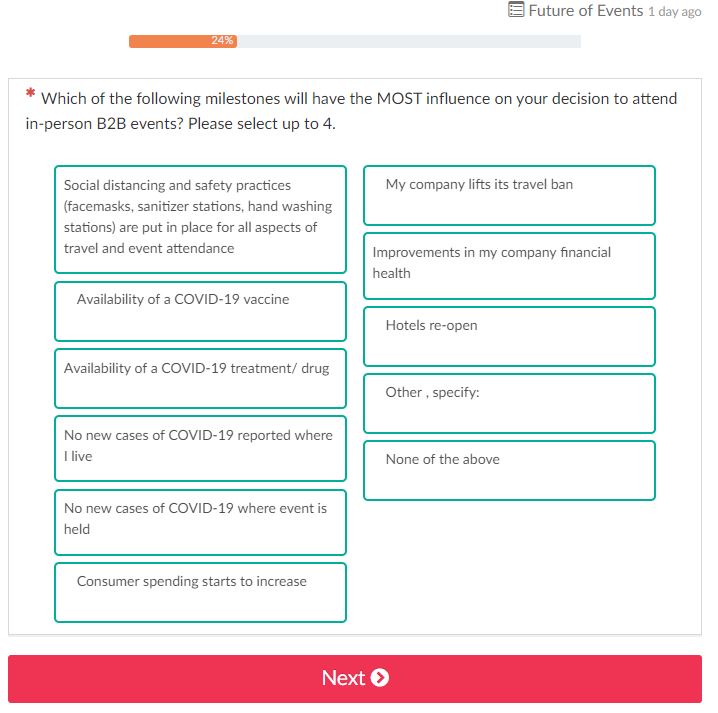

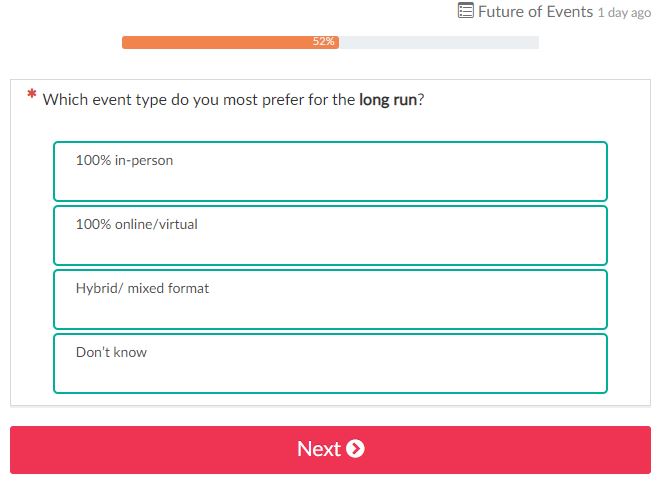

Julian Abich, Ph.D., Senior Human Factors Engineer

I'll start this out like every blog post in 2020…COVID-19 sucks and has thrown a wrench into everything! From a professional perspective, it has affected us all in different ways, but overall it has forced us to rethink the way we conduct our work. For human factors and UX/UI researchers, it has greatly limited our ability to conduct live field research with human participants. Because of this, data collection methodologies right now are focused heavily on online or remote approaches. Specifically, web-based surveys may be flooding your inbox.

Surveys in general have been around for quite some time, over a century within the U.S. (Converse, 1987). Web-based methods have been in use since the early days of the internet. Some state that they were one of the most significant advances in survey technology of the 20th century (Dillman, 2000).

"With great power comes great responsibility" (I'll attribute this quote to the late and great Stan Lee). Okay, so survey writing may not be a superpower, but it is a powerful tool for collecting quantitative and qualitative data when implemented correctly. Actually, if I can understand a person's behavioral patterns based on collected data, then that kind of gives me the power to predict the future. I guess I do have superpowers!

Despite the longevity and broad applications of surveys, I constantly come across poorly designed online surveys and it hurts my human factors brain. This is likely because anyone can create a survey, especially a web-based one, but that doesn't mean you should. So what should you do? Work with professionals who are trained to extract valuable information from participants or respondents. It may seem like an easy task to do on your own, but being able to generate a valid and effective survey is as much of an art as it is a science.
You don't become a great scientist or artist overnight; it takes time and experience to hone those skills.

Think about it this way: if you're planning to use the data collected from a survey to drive decisions about a product design, an event, or whatever, don't you think it's important to gather the most informative data possible? Especially if these products or events cost hundreds of thousands to millions of dollars to develop. I'll answer for you…YES!

So why are there still so many issues with online surveys? I say it's probably because people don't know what they don't know. They aren't aware of, or trained in, how to create appropriately worded questions, organize the flow, or understand the factors that might influence a person's response to a question. As a result, they end up drawing conclusions that don't accurately reflect respondents' views, behaviors, or beliefs. The end result may be a poorly designed product that no one wants to use.

As I was writing this, I received another request to fill out a survey. This one seeks feedback on future audiovisual events and insight into future needs to help produce in-person and virtual events. Let's see how well this survey is designed or if there are ways it can be improved.

Target the right audience. The email was sent to me because I attended a related conference last year. So far so good. Randomly sampling the population is another way to collect survey data and is what makes web-based surveys so attractive, but sampling techniques should depend on the context of the survey.

Always spell out acronyms. Not all respondents are going to know what the acronyms are, so it's best to make sure they are spelled out, at least the first time they are used.

Below is an example of the first question that is asked. Besides the question, do you notice any other potential issues with the format or design of the form?
(Don't ask me what "Future of Events 1 day ago" means because I have no idea.) This is what the form looks like when you choose an answer. See any other issues with how it's designed? Maybe a color selection issue?

Rating scales should align with the questions and be consistent. First, the scale should make sense when associated with a question. When Likert-type scales are used to gather responses, the lower end of the scale usually refers to less agreement, frequency, importance, likelihood, or quality. Do you see an issue with the scale below? Second, anchor labels could be used to show the extreme ends of the scale, but should still have an associated value (although it's best to have labels and values for every level of the scale to avoid confusion). Third, a label for the center of the scale should be provided because the middle may be assumed to be a neutral response. Fourth, the scales should be as consistent as possible throughout the survey. Meaning, if you're using a 10-point scale, then don't make the next question a five- or seven-point scale as seen in the images below. This just makes answering questions more challenging for the respondents.

Avoid double-barreled questions. The questions above are also designed poorly because they bundle multiple items, and respondents might not agree with all of them. Asking the respondents if they prefer "workshops, panels, and training" to be online takes away their ability to agree with only one of those. It may seem tedious, but these types of questions need to be reworded or broken out into separate questions.

Avoid leading and loaded questions. Do not force respondents to provide answers that don't truly reflect their sentiment. Below it asks respondents to select four, but what if respondents only agreed with one or two items? Now you're forcing respondents to provide biased answers that don't truly reflect how they feel or would behave. See a problem with this?
Rephrasing the question to say they could choose up to four is different than saying they have to choose four.

Make sure the question wording is clear and well defined. Don't leave the wording of questions open for interpretation (unless that is intentional). Every included word should be chosen with a clear purpose. If you asked different respondents what "long run" meant, you would probably receive many different answers.

These are just some examples to highlight the challenges with creating a valid survey, although there are many other issues that can arise. It may not be obvious until you sit down to analyze the data that you have a complex data set and it's difficult (or impossible) to generate appropriate conclusions for decision-making. Don't waste your time and resources collecting worthless data when you can do it correctly the first time. You'll be happy, your boss will be happy, your customers will be happy, and the respondents will be happy to know their opinions matter.

Contact us if you have any questions (no pun intended) or need support with your data collection efforts.

References:

Converse, J. M. (1987). Survey research in the United States: Roots and emergence, 1890–1960. Berkeley, CA: University of California Press.

Dillman, D. A. (2000). Mail and Internet surveys: The tailored design method. New York: John Wiley & Sons, Inc.


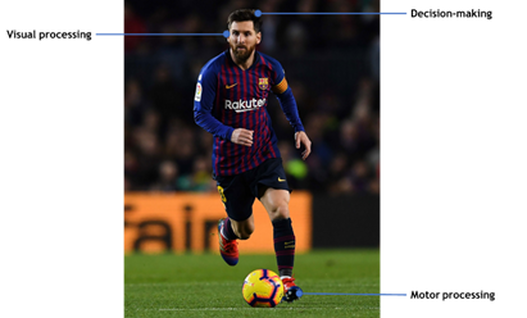

CJ Montalbano, Human Factors Engineer

The Potential for Change

Did you know that with regular practice of activities aimed at training the brain, it is possible to improve working memory (Klingberg, 2010), visual processing (Willis et al., 2006), control of attentional resources (Burge et al., 2013), and stress-response resiliency (De Witt, 1980)? Brain training, or cognitive training (CT), is typically used in medical settings, for example to improve cognitive functioning in traumatic brain injury patients. However, CT is being used in other settings, such as sports.

Sports Performance is Cognitively Demanding

Playing any sport places high demands on cognitive functioning including, but not limited to, decision making, working memory, visual and perceptual processing, motor functioning, and divided attention. In moments of normal gameplay, the amount of brain processing needed to evaluate, act, and perform optimally for every possible situation is astronomical. Moreover, players frequently encounter high-pressure situations where stress-response regulation can be crucial to performance outcomes (Eysenck and Wilson, 2016).

With this in mind (no pun intended), take a moment and try to place yourself inside the head of a competitive soccer player; say Lionel Messi for example. At all moments on the field, Messi must be aware of his positioning relative to the current and projected state of the ball, his teammates, and his opponents. This is only the start of the mental juggling act needed to be an effective player. Of course, Messi must also act on these thought processes repeatedly, which requires precise split-second decision-making. In fact, one judgment error or slight hesitation can set off a domino effect of miscalculations, likely leading to an opponent goal or missed offensive opportunity.
So, when it comes to training, the traditional practice paradigm may be enough to improve a player’s physical performance and technical skills, but there’s clearly a mental facet to the game that needs to be considered.

Current CT Applications in the Sports Field

There are several companies producing commercial CT solutions aimed specifically at improving sports performance, such as Axon Sports, FITLIGHT, and NeuroTracker, to name a few. CT is already well established in the professional sports realm. For instance, NeuroTracker has an extensive client list, with teams from the NFL and Premier League bought in, and research supporting its efficacy. One such study used a 3-dimensional multiple object tracking (3D-MOT) task which required participants to track and recall multiple moving objects in a changing visual field (Romeas, Guldner, and Faubert, 2016). The concept behind this task is that it may actually simulate the cognitive processing occurring in Messi’s head when he’s evaluating his surroundings on the field.

In the study, the effects of training for 19 male soccer players were examined across three groups (3D-MOT, passive control, and active control). The 3D-MOT group trained twice per week for 5 weeks, whereas the active control group watched 3D soccer videos and partook in engaging interviews, and the passive control group received no treatment between evaluations. Interestingly, the 3D-MOT trained group improved by 15% in a measure of on-field passing decision-making. However, there were no improvements in shooting or passing accuracy, which underscores the potential lack of transfer effect to similar yet different tasks (Walton et al., 2018). Moreover, another study found that while participants improved significantly in the training task post-training, no evidence was found for near transfer (to another object tracking task) or for far transfer (to a driving task that required recalling specific locations) (Harris et al., 2020).
This concept of transferring training to the specific performance task is a major talking point in terms of the efficacy of CT applications. Transfer of training refers to the generalization of skills attained from training, which are then applied to different tasks and domains. One way transfer tasks are categorized is by how near or far they are from the training task; in other words, the more closely the transfer task resembles the training task, the nearer the transfer. Unsurprisingly, the likelihood of transfer effects is directly related to the degree to which the transfer task resembles the training task, thus near transfer is much more commonly seen (Sala et al., 2019).

Sport-based CT is no exception to this phenomenon. Demonstrating far transfer of CT to sports performance is especially difficult given that sports are highly variable, with many factors that ultimately contribute to the success or demise of a player (e.g., natural performance variances, nutrition, emotional state, sleep deprivation, etc.) (Walton et al., 2018). For example, measuring win/loss ratios (e.g., season performance) may be an appealing metric for CT transfer, but again there are so many factors that play into a team's success that it’s difficult to single out CT as the variable responsible.

What Do You Think?

Put yourself in the mind of a coach (yes, I’m sorry, you are officially no longer Messi). Now, consider the following: Would you pay for a CT product that may or may not transfer successfully to the pitch? If it were me, I just might, as it’s a pretty neat concept. That said, I think it’s clear that researchers in the field of CT and developers of these systems should continue to make collaborative efforts to better understand and evaluate the transfer effects of training tasks to real-world performance outcomes.
Eventually, with the advent of innovative training designs and accurate ways to determine their effectiveness, I believe CT will not only hold its own in the sports realm, but also in a variety of other settings.

Sources

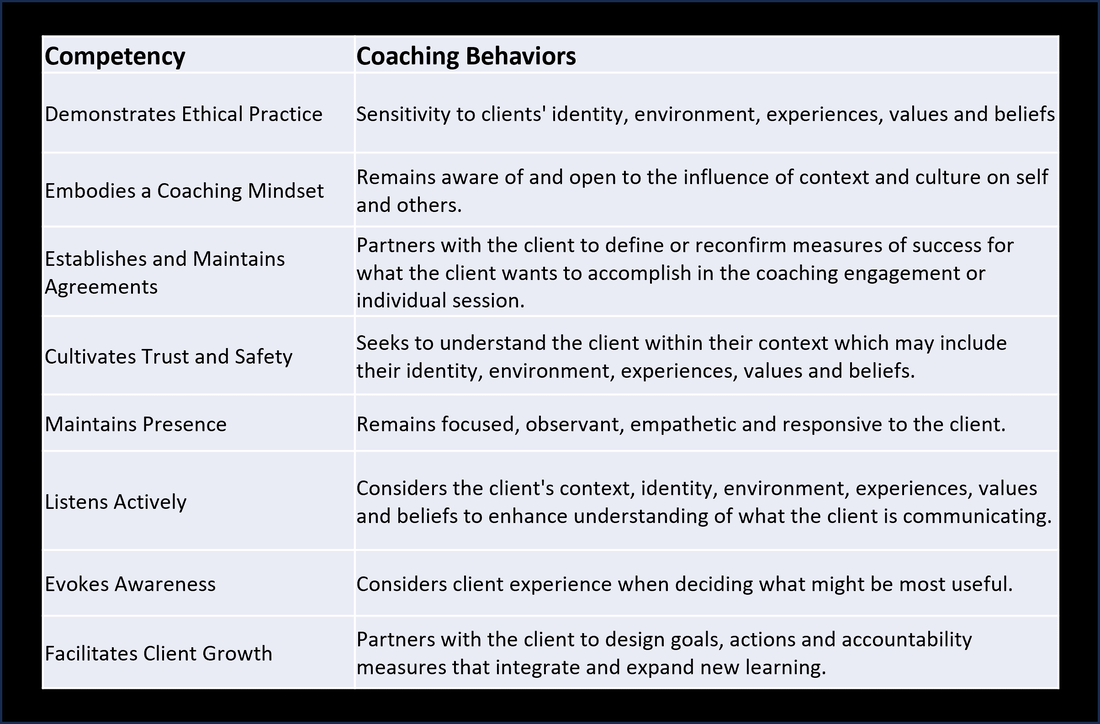

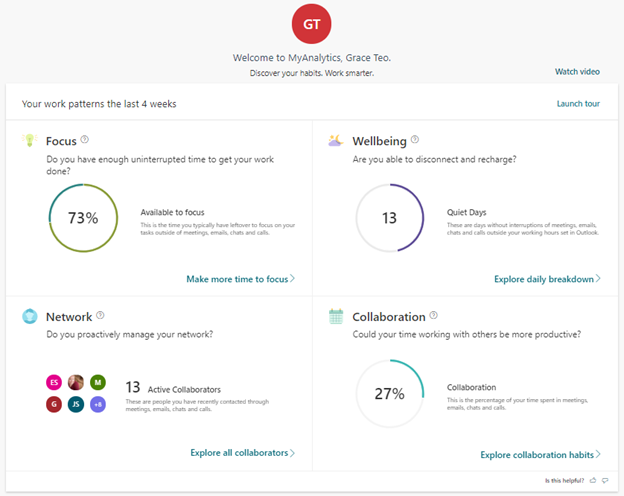

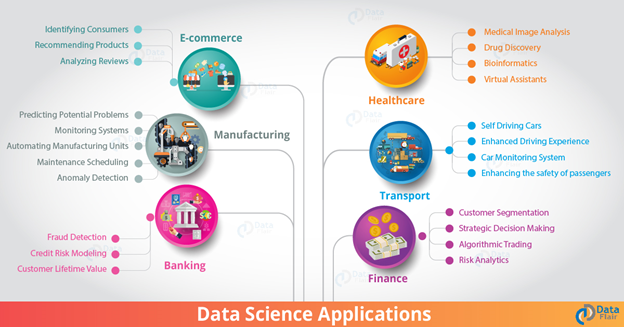

Burge, W. K., Ross, L. A., Amthor, F. R., Mitchell, W. G., Zotov, A., & Visscher, K. M. (2013). Processing speed training increases the efficiency of attentional resource allocation in young adults. Frontiers in Human Neuroscience, 7, 684.

De Witt, D. J. (1980). Cognitive and biofeedback training for stress reduction with university athletes. Journal of Sport and Exercise Psychology, 2(4), 288-294.

Eysenck, M. W., & Wilson, M. R. (2016). Sporting performance, pressure and cognition 14. An introduction to applied cognitive psychology.

Harris, D. J., Wilson, M. R., Smith, S. J., Meder, N., & Vine, S. J. (2020). Testing the effects of 3D multiple object tracking training on near, mid and far transfer. Frontiers in Psychology, 11, 196.

Klingberg, T. (2010). Training and plasticity of working memory. Trends in Cognitive Sciences, 14(7), 317-324.

Perceptual-Cognitive Training Solution. (2020, March 27). Retrieved June 26, 2020, from https://neurotracker.net/

Ping, J., Liu, Y., & Weng, D. (2019, March). Comparison in depth perception between Virtual Reality and Augmented Reality systems. In 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR) (pp. 1124-1125). IEEE.

Romeas, T., Guldner, A., & Faubert, J. (2016). 3D-Multiple Object Tracking training task improves passing decision-making accuracy in soccer players. Psychology of Sport and Exercise, 22, 1-9.

Sala, G., Aksayli, N. D., Tatlidil, K. S., Tatsumi, T., Gondo, Y., & Gobet, F. (2019). Near and far transfer in cognitive training: A second-order meta-analysis. Collabra: Psychology, 5(1).

Simons, D. J., Boot, W. R., Charness, N., Gathercole, S. E., Chabris, C. F., Hambrick, D. Z., & Stine-Morrow, E. A. (2016). Do “brain-training” programs work? Psychological Science in the Public Interest, 17(3), 103-186.

Walton, C. C., Keegan, R. J., Martin, M., & Hallock, H. (2018). The potential role for cognitive training in sport: More research needed. Frontiers in Psychology, 9, 1121.

Willis, S. L., Tennstedt, S. L., Marsiske, M., Ball, K., Elias, J., Koepke, K. M., ... & Wright, E. (2006). Long-term effects of cognitive training on everyday functional outcomes in older adults. JAMA, 296(23), 2805-2814.

Dr. Grace Teo, Sr. Research Psychologist

Although no one from the office directly observed me doing work during the COVID quarantine, someone else was keeping watch. They noted and reported back to me how much time I spent in “deep focus” and on emails and online chats, how long my meetings ran and whether they were accompanied by agendas, who my collaborators were, and my level of well-being (i.e., the time I actually “powered down” from work). Yes, Microsoft’s MyAnalytics (see Fig. 1) was my productivity pal while I worked from home. I indulged myself in this new distraction, reading my stats and delving into the research and insights, discovering things like “it can take up to 23 minutes to refocus after checking just one email or chat”, “having long blocks of time to focus without interruptions can help you get challenging work done faster”, and “last-minute invitations are sometimes necessary, but your meetings may be more effective if you give attendees sufficient time to prepare”.

Applications of data science methods are becoming prevalent in more and more domains of our work and life. Microsoft’s MyAnalytics is just one of many applications (see Fig. 2) that we encounter, whether we know it or not. A 2018 Forbes article provided a quick overview of some data science tools, which include (i) data analytics, (ii) predictive modeling, and (iii) artificial intelligence and machine learning (Forbes, 2018). As it turns out, these tools closely mirror much of what we do in our everyday interactions with others.

Data analytics merely involves examining and describing past data, such as past usage and activities. There is no prediction or extrapolation done with the data, as is the current state of MyAnalytics.

Human analogy: We perform data analytics when we take a matter-of-fact approach in noting someone’s past behaviors, like when someone was late for all of the Thursday meetings in the past month. We do not use it to infer anything about their patterns of behavior or personality, and do not try to project their future actions.

Predictive analytics takes things a step further. This involves using past data to derive some general pattern or model, which is then used to predict future events. Regression is a common statistical technique for developing such models from past or observed data.

Human analogy: We perform predictive analytics to make sense of the world through patterns. For instance, when we see someone being late for a particular Thursday meeting more than a few times, we start to expect/predict that this person will be late for future meetings. In doing this, we create a “model” of the person’s behavior for meetings.

Artificial Intelligence and Machine Learning (AI/ML) goes even further. In addition to using past data to predict future events, AI/ML involves autonomously learning and adjusting the model without any human intervention as new data comes in. This is what enables Facebook to get better at recognizing your friends by continually learning their unique features from each new picture of them in various poses and lighting conditions.

Human analogy: This type of learning comes closest to what humans actually do with new information. Our understanding and predictions about someone get better the more we see him/her in various contexts and roles. Our knowledge of this person gets richer with each new encounter.
For instance, after seeing the same person in other meetings on Thursday, non-work meetings, and meetings with other customers, we realize that s/he is only late for the Thursday meetings that occur with a particular customer.

So what do you think of apps such as MyAnalytics? Do you think it would help your productivity? In what aspects of your life would you not welcome the use of such apps? Leave a comment and tell us what you think!

Eric Sikorski, Ph.D., Director of Programs & Research

Whether a fan or not, you are likely aware that the sports world came to a grinding halt in March due to COVID-19. No sports played means no new sports on TV. So, from football to fútbol, foot races to auto races, until recently fans have been subjected entirely to sports reruns. At first, I was critical of the concept, until I spent a solid two hours watching the 2019 World Track and Field Championships. So why would anyone, including me, watch sports reruns when the outcome has already been realized? George Costanza’s explanation of “because it’s on TV” was not quite satisfactory, so I decided to inquire further.

The eight types of motives for watching sports, from a validation study of the Sport Fan Motivation Scale (Wann, 1995), provide an interesting framework for answering the question. There are two motives that can be eliminated immediately. Eustress, which is basically positive stress, seems unlikely with the game’s outcome known. Economic factors, defined by Wann (1995) as economic gain such as through gambling, are not applicable. If you are betting on past sporting events, you, or the person taking your bet, may have bigger issues.

Sporting events, and even replays of them, can serve as a temporary escape, taking one’s mind off everyday life. This may be especially welcome during extremely difficult times, like a global pandemic.
In terms of entertainment, similar to watching a movie you have seen before, a foregone match still has entertaining elements beyond outcome suspense. Along these lines, people may also watch sports for aesthetic reasons, seeing the event as a form of art (Wann, 1995). Like hanging a favorite painting in your home, watching sports reruns is a way to revisit that beauty and creativity… sort of.

Self-esteem benefits are realized when “your” team succeeds, along with a sense of identification and belongingness from being a fan of that team (Wann, 1995). Seeing your team succeed again, even while knowing it’s a rerun, may serve as a reminder of the team’s greatness and your affiliation with them. This is similar to the motivation of nostalgia in television program rerun viewing (Furno-Lamude & Anderson, 1992). Of course, affiliation needs can also be met through camaraderie with fellow fans, though I doubt texting your buddy about the Nats’ World Series replay represents the same shared experience as when they were actually “finish(ing) the fight”. Finally, watching sports reruns together may fulfill family needs, but likely not to the same extent as live sports, with the family rallying around the cause of cheering for their team.

If, like me, you were wondering why someone would watch sports reruns, hopefully this helps address your curiosity. If you are looking for something to watch, I hear the NHL Network is replaying the Buffalo Sabres vs. Dallas Stars Stanley Cup Finals Game 6. Maybe this time the officials will make the right “no goal” call and the outcome will be different, though I wouldn’t bet on it.

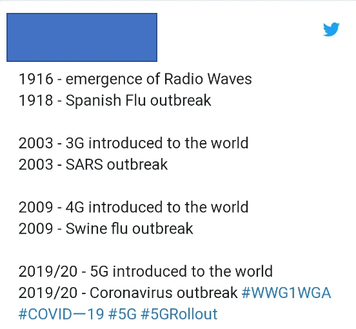

Michael King, Ph.D., Human Factors Engineer

Michael King is a Human Factors Engineer at Quantum Improvements Consulting. He has 3 years of experience managing a research lab in a university setting, focusing on the relationship between cognition and human skill and performance. Michael earned his Ph.D. in Experimental Psychology at Case Western Reserve University, where he researched the cognitive and perceptual factors that influence human performance, such as memory, attention, and learning. Michael’s research interests center around human performance, training, and the predictors of success in Defense settings.

Grace Teo, Ph.D. - Senior Research Psychologist

Dr. Grace Teo is a Senior Research Psychologist at Quantum Improvements Consulting. Grace earned her Ph.D. in Applied Experimental and Human Factors Psychology, and certificates in SAS Data Mining and Design for Usability, from the University of Central Florida. She has work experience in both Industrial & Organizational (I-O) and Human Factors (HF) psychology. Her I-O psychology work included developing competency profiles and selection assessment methodologies for scholarship and job applicants for various positions in the Singapore Civil Service, and designing organizational surveys and leadership research. Grace’s HF psychology experience involves the use of knowledge elicitation techniques, as well as subjective and physiological measures to analyze and assess. She is keen to use both theory-driven and data-inspired approaches to understand and improve human performance under various conditions and in different contexts, such as working with different technologies and in teams. Grace’s dissertation was on enhancing performance in a human-robot team by managing workload through a closed-loop system. The research involved developing a workload model based on physiological workload measures.
Her research interests include decision making processes and measures, vigilance performance, human-robot teaming, automation, and individual differences. Grace has presented her work at several conferences, including the HFES, AHFE, APA, HCII, and ESV conferences, and is published in peer-reviewed journals.

Jacquelyn Schreck - Human Factors Engineer - Intern

With major events and/or crises there often comes misinformation, fake news, and conspiracy theories. No matter how outrageous the claim, such as 9/11 being an inside job or lizard people infiltrating the government and the entertainment industry by holding high-power positions, there are people who will buy in. Unfortunately, the COVID-19 global health crisis is no exception when it comes to misinformation and conspiracy theories. In March 2020, the Pew Research Center polled American adults on how they believed the virus started. Out of 9,000 adults, only 43% believed it was natural (i.e., not man-made), 23% believed it was intentionally man-made, 6% believed it was man-made but unintentional, and 1% didn’t believe the Coronavirus existed at all (that’s 90 people!). There was no information on the remaining 27%, but it’s likely that this indicates the number of people who didn’t respond. Regardless, based on these results, it appears that people are not only misinformed but uninformed as well.

Currently, there is no remedy or cure for COVID-19 (no, drinking cleaning supplies, such as bleach, is NOT one), but there is a remedy for misinformation: critical thinking.
According to the Foundation for Critical Thinking, it is a “mode of thinking - about any subject, content, or problem - in which the thinker improves the quality of his or her thinking by skillfully taking charge of the structures inherent in thinking and imposing intellectual standards upon them.” Please consider applying intellectual standards when evaluating information related to COVID-19.

Though there are multiple COVID-19 conspiracies, the most widespread is that the roll-out of 5G signals impacted the Coronavirus. Some theories are that it weakens the immune system, making the virus harder to fight off; that the government is using the lock-down to install networks; and my personal favorite, that it’s an Illuminati mass-murder plot. “Experts” have made claims about 5G as well. For example, according to a physician in California, the Coronavirus is the effect of poison from 5G. Another expert offered the counterargument that 5G waves are too similar to already-existing electromagnetic waves to be the cause of a pandemic. Of course, we cannot ignore the fact that electromagnetic waves are not viruses. That's science. In cases like these, it is necessary to scrutinize the level of expertise and the information presented. Always seek multiple sources of information, and consider the basis of the information provided, the motivation of the person, and the benefits they would gain from your compliance. It is also important to remember that correlation does not equal causation. In the image below, a Twitter user is trying to make an argument that new advancements in radio waves precede major pandemics.

People are increasingly spending time on, and getting their news from, social media. While often criticized for spreading false information, social media companies have demonstrated awareness of false information about COVID-19 and are working on finding ways to combat it.
TikTok, for instance, has partnered with the World Health Organization (WHO) to help disperse accurate and helpful tips to its users. One TikTok from WHO shows how to properly use a facemask. TikTok isn’t the only media source combatting misinformation. Facebook, YouTube, Twitter, and more have been called out about the spreading of fake news and the lack of moderation on their platforms and have since made claims explaining how they have taken action. Facebook is using fact checkers and flagging false information. YouTube and Twitter will be relying more heavily on artificial intelligence and machine learning to monitor their platforms by automatically hiding content deemed harmful by the algorithm.

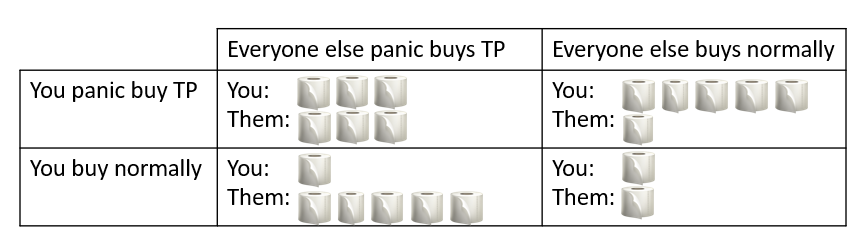

While it is important to remain open-minded and consider all points of view, it is also important to scrutinize and be skeptical of the information that is being given to the general public. If something sounds off, it just may be, so take the time to examine it further. Fact check, identify reliable sources, and be aware of your own potential errors in decision making and opinions. This barely scratches the surface of what is happening with fake news, conspiracies, and more, but to sum up, please refrain from drinking bleach.

Jennifer Murphy, Ph.D., Founder & CEO

If we all remember one thing from the COVID-19 outbreak of 2020, it will be the Great TP Crisis that gripped the entire planet. Of all things we could be doing to hunker down, this is easily the least rational, but also the most meme-worthy. What is wrong with us? There are so many psychological phenomena that come into play in a crisis like a pandemic that you could write a book on them, but we're going to frame the TP Crisis using a contrived situation often used to research decision-making in social contexts, called the "prisoner's dilemma." In this situation, two people are separated and given the following scenario:

You are a member of a gang. The prosecutors have charged you with a serious offense, but only have enough evidence to convict you of a lesser one. You have been offered a deal wherein you can testify that the other committed the more serious offense. Alternatively, you can remain silent. The possible outcomes are:

1. If you and the other member betray each other, both of you will serve 2 years in prison.
2. If one of you rats out the other, that one will be set free, and the other will serve 3 years in prison.
3. If neither of you rats out the other, both of you will serve 1 year in prison.

What do you do? The "right" answer, from a self-preservation perspective, is to rat your partner out.
Sure, you might have to do 2 years in prison, but it avoids the worst possible scenario.

To put this in toilet paper terms: Let's say you normally buy enough TP to last a month, but because this is an emergency, you want to make sure you have enough for several months. Regardless of how everyone else behaves, it's in your personal best interest to hoard it. If other people are panic buying, there will be less for you, and if other people are not panic buying, then more TP for you!

When placed in an experimental setting and given one chance to play this game, most people will make a rational choice and buy too much TP. In these situations, participants are told they don't know the other person, and that person will never be able to retaliate. However, when people are asked to play out this scenario over multiple instances with feedback about how the other person responded, cooperative behavior begins to emerge. This paradigm, the "iterative prisoner's dilemma" (also called the iterated prisoner's dilemma), has been used to study cooperative and altruistic behavior. In these cases, the best approach is usually a "tit for tat" strategy, in which you do whatever the other player did on the previous round. However, that depends upon what the other person does. Winning the long game requires paying attention to their strategy and predicting their next move, which is not something any of us have the capacity to do in a toilet paper crisis scenario.
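For readers who like to see the numbers, here is a minimal Python sketch of the scenario described above. The prison terms come straight from the three outcomes in the post; everything else (the names `YEARS`, `best_response`, `tit_for_tat`, `always_betray`, `play`, the 10-round game length, and the choice to have tit for tat stay silent on the first round) is an illustrative assumption, not something taken from a specific study.

```python
# Prison terms in years, keyed by (my_move, their_move), from the scenario above.
# "betray" = testify against the other member; "silent" = stay quiet.
YEARS = {
    ("betray", "betray"): 2,
    ("betray", "silent"): 0,
    ("silent", "betray"): 3,
    ("silent", "silent"): 1,
}


def best_response(their_move):
    """Return the move that minimizes my own sentence, given the other's move."""
    return min(("betray", "silent"), key=lambda mine: YEARS[(mine, their_move)])


def tit_for_tat(opponent_history):
    """Copy the opponent's previous move; stay silent first (an assumption)."""
    return opponent_history[-1] if opponent_history else "silent"


def always_betray(opponent_history):
    """The one-shot 'rational' choice, repeated every round."""
    return "betray"


def play(strategy_a, strategy_b, rounds=10):
    """Play repeated rounds and return total years served by (A, B)."""
    seen_by_a, seen_by_b = [], []  # each player's record of the other's moves
    total_a = total_b = 0
    for _ in range(rounds):
        move_a = strategy_a(seen_by_a)
        move_b = strategy_b(seen_by_b)
        total_a += YEARS[(move_a, move_b)]
        total_b += YEARS[(move_b, move_a)]
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return total_a, total_b


if __name__ == "__main__":
    # One-shot game: betraying is best whatever the other person does.
    print(best_response("betray"), best_response("silent"))  # betray betray
    # Repeated game: mutual tit for tat beats mutual betrayal.
    print(play(tit_for_tat, tit_for_tat))      # (10, 10)
    print(play(always_betray, always_betray))  # (20, 20)
    print(play(tit_for_tat, always_betray))    # (21, 18)
```

In the one-shot game, betraying comes out ahead no matter what the other person does, which is the hoarding logic. Over ten rounds, two tit-for-tat players serve 10 years each while two constant betrayers serve 20 each, which is one way to see why cooperation can emerge once there is feedback.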

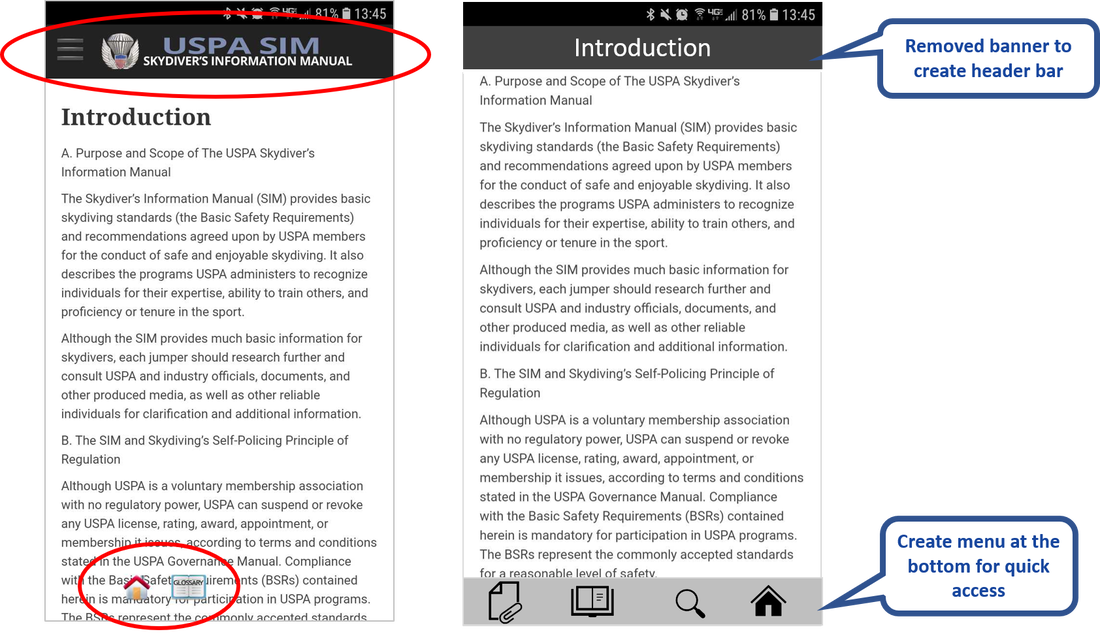

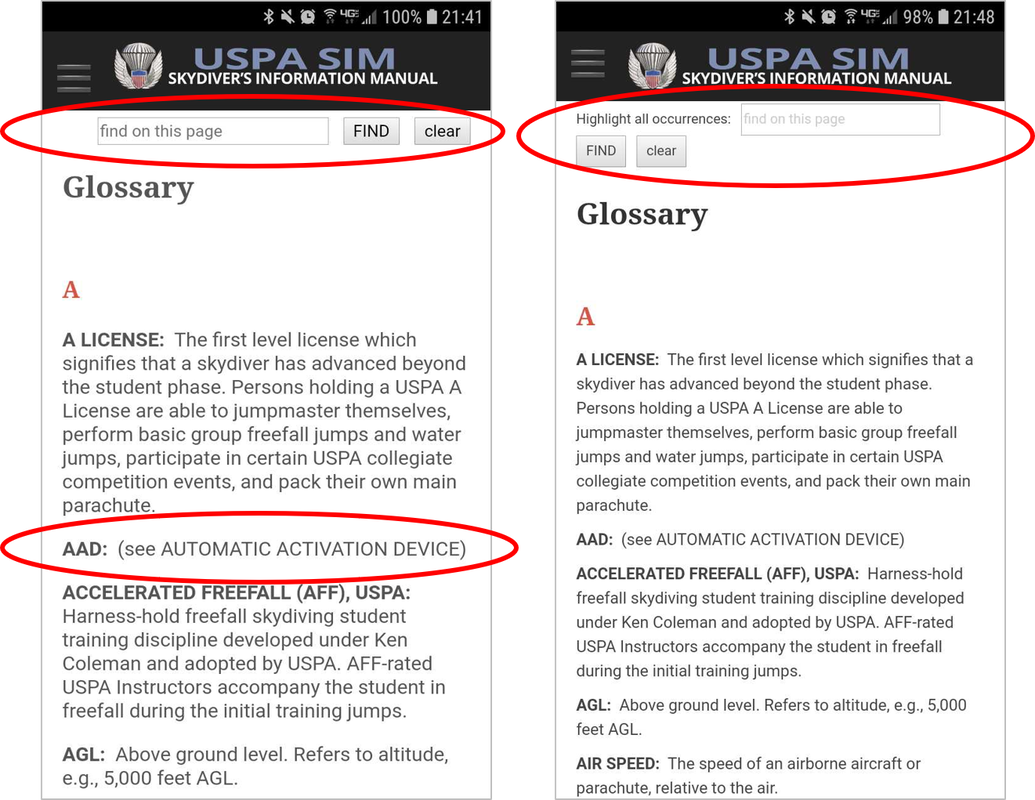

What's the takeaway here? Hoarding TP is not in everyone else's best interest, but from a game theory perspective it certainly is in yours, at least in the short term. I'm not endorsing hoarding anything, but of all the seemingly irrational things you could do during these unprecedented times, if you find yourself with the urge to panic buy TP, at least you know you're not totally nuts for doing it.

Julian Abich, Ph.D., Sr. Human Factors Engineer

I became a recreational skydiver in June 2016. Why would I learn to jump out of a perfectly good airplane, you ask? Well, because it's fun (See Figure 1)!!! And if you haven't done it yet, I highly recommend you do! But I am not here to convince you to go. What I do want to briefly write about is my user experience with the new United States Parachute Association (USPA) Skydiver's Information Manual (SIM) mobile app (that's a mouthful). The purpose of the USPA SIM is to provide the basic skydiving standards, policies, training programs, and recommendations for safe and enjoyable skydives (USPA SIM, 2018). What they have done is take 200+ pages of content and create a mobile app. It was released this year (February 2020).

As is the case for most professionals in the human factors or usability fields, it's hard not to immediately and critically evaluate any piece of software or technology that dares cross my path. I won't get too far into the weeds, but I do want to highlight a few issues I came across and provide recommendations for improvement. I say these things not to hurt, but because I want this app to be a success. Let's dive in! [Ba-dump tsss]

Figure 1. Me doing a backflip during accelerated freefall training

Figure 2. Splash screen mockup

When the app is first launched it goes directly to the introduction content.
This content is exactly what is present in the digital/hardcopy manuals. Surprisingly, there is no splash screen (also called a launch or startup screen). It may seem trivial, but there are millions of apps on the market, so first impressions are important to keep users intrigued and wanting to continue further. Adding a simple splash screen can help reinforce the identity of the organization or brand. It also helps initiate the user's journey. A simple suggestion is provided in Figure 2.

On the first page you can see there are two floating icons on the bottom of the screen (Figure 3: Left). I assume the house icon is supposed to bring you to a home screen, but this introduction page seems to be the home screen. And if you navigate to any other content in the app, these icons no longer exist. I would expect the home screen icon to bring me to an organized dashboard or menu of content. The second icon says 'glossary' and if tapped it does bring you to a glossary. But the icon is transparent and hard to read over the other text in the background. A simple solution is to remove both icons unless research, such as a front-end analysis or usability evaluation, has shown that users really want these items quickly accessible. If so, then dedicate a space on screen where they are always present.

A recommendation is to add a visible menu at the bottom of the screen (Figure 3: Right). This menu could also be programmed to hide, yet still be easily accessed with a quick swipe up. This would eliminate the need for the hamburger menu currently at the top. Other functional icons can be placed here as well, such as a search icon and an appendix icon (both of which are currently accessed from the hamburger menu icon).

Another suggestion is to remove the banner across the top with the USPA SIM name and logo. Users don't need to be constantly reminded that they are in the app. They will know because of their interaction with the content.
Either remove this completely to create more space for content or use it as a header bar to provide context for the content on that page (Figure 3).

I somehow came across two different versions of the glossary depending on how I navigated to it. When opening the app, if you tap the hamburger menu (the icon with three stacked horizontal bars on the top left) and choose the glossary, it takes you to the one on the left in Figure 4. But if you leave it and access it again, it changes to the one on the right in Figure 4. The differences are in the search bar and the font size of the glossary terms. Choose one version and make sure it’s the only one in the app. Regarding the search bar function, all it does is highlight the matching text in the glossary, which can only be seen when you scroll through the terms. It does not compile a list of search results with links to the specific terms that contain the searched words. Also, some glossary entries tell you to see another term (Figure 4: Left). These suggestions should be hyperlinks to the indicated glossary term.

There are a few other issues regarding the app functionality that I could address, but I want to discuss a more important issue. It's more of a concern about the overall app. I've experienced this with many other apps, especially education-focused ones. Very often customers want to create a mobile app as a way to support and promote mobile learning. And for some reason, they take their textbooks, manuals, etc. and create mobile app versions of them. So instead of leveraging the technological functionality that a mobile app offers, the app is forced into the format and structure of a textbook.

With a mobile app, the content can be better organized for easy and fast access. 200+ pages of content is a lot, so break it down into smaller groups to facilitate consumption and retention of information (i.e. microlearning).
Hyperlinks can help users navigate quickly to content both within and outside of the app. Graphs, tables, figures, and illustrations that work in a textbook won't always work in a mobile app. This is usually because of size and legibility. Rework them into a mobile-presentable form and take advantage of animations and videos to help convey content. There are quizzes and study sheets contained within the content. Make those much more easily accessible, rather than leaving them buried within the extensive amount of content.

My conclusion is that there is an opportunity to provide the skydiving community access to all of this updated, important information in an easily accessible and portable format. Work with a team that understands instructional design, training, and usability. They (we) can help you design an engaging app that effectively helps new and current skydivers learn and retain this information. Not only will it help improve usage, it will contribute to the safety of the sport. Blue skies!

Recommendation Recap:

References:

United States Parachute Association (USPA) (2018). Skydiver's Information Manual. Retrieved on Feb 20, 2020 from https://uspa.org/SIM.

USPA SIM (Android): https://play.google.com/store/apps/details?id=org.uspa.app

USPA SIM (Apple): http://appstore.com/apple/uspasim

Julian Abich, Ph.D., Sr. Human Factors Engineer

We started the year flying high by attending the SciTech 2020 Forum & Expo hosted by the American Institute of Aeronautics and Astronautics (AIAA) in the City Beautiful (a.k.a. Orlando, FL). I had two goals: find presentations on human factors topics and meet new folks within the field who are conducting training with immersive technology. After keyword searching through the digital program, I found two sessions called Augmented and Virtual Reality Technology - Human Factors and Training. Killed two birds with one stone, or rather knocked out two goals with one session (weak pun). I was excited because in previous years there were only a few human factors sessions, since SciTech is heavily focused on aerospace engineering. It was interesting to see how the use of immersive tech has been spreading throughout many fields and has gained importance within this domain.

In one of the sessions, a presentation was given on NASA's Virtual Lab (VR) that discussed the history of the lab, the programs they've supported, and future plans. My one question was (and will likely always be): how do you show the effectiveness of using immersive tech and the benefits it supports? And I was shocked by the answer, which was that they don't. Not because they don't want to, but because they often don't have the resources (e.g. time, money, personnel, etc.). What continues to drive their work is the validation they receive from the astronauts. I feel this point is often overlooked: getting end-user feedback as a level of validation.
And in this case, the end-users are also subject matter experts (SMEs) who can provide insight into how well the VR training will likely transfer to the real world.

Another interesting talk was about Augmented Eye, which is a pilot instructor support tool. Instead of developing augmented reality (AR) technology specifically for trainees, they created a support tool that allows instructors and trainers to view the ocular behavior of pilot trainees during training scenarios in real time. By using the AR system combined with eye tracking, instructors can now see where pilots are looking, allowing them to immediately provide feedback or correct behaviors before ineffective strategies are developed.

During the second session, there was a presentation on using VR to create what was being termed fused reality. It relates to the concept of mixed reality: external cameras on a VR head-mounted display (HMD) project real-world feeds within the display, and virtual objects are then added within the projected environment. What really struck me as amazing is that they tested their proof-of-concept with a pilot flying a real plane (don't worry, they had a safety co-pilot). They were able to show how various tasks can be trained, such as formation flying, aerial refueling drogue tracking, and runway approach practice in mid-air, without the need to fly expensive aircraft or require other physical aircraft to be present. And further, no simulator sickness was felt. This was likely due to the low latency and the true sensations felt by flying the real plane. I can see this utilized as a transition training phase between full simulator and real-world training.

And although I may be biased because I am a recreational skydiver, I was very excited to learn about PARASIM. It is a parachute simulator used for pre-jump exposure and emergency egress training procedures. Users wear a VR HMD and are strapped into a parachute harness that is tethered to a frame, which allows the user to experience free fall in a horizontal position.
When the skydiver pulls the ripcord (since this is used for military training) to release the main canopy (a parachute, for the non-skydivers), the user's legs are released to adjust the body back into a vertical position, as it would be under canopy. Again, my question was, have you conducted any transfer of training or effectiveness studies? And again, no, they have not had the resources to support it, but they do have professional skydivers on staff who provide expert knowledge and feedback, which helps validate the training content and platform physics.

All in all, SciTech continues to expand and showcase some of the latest work in the aeronautics and astronautics fields with an ever-increasing use of immersive technology. Looking forward to seeing what will be presented next year. How are you taking innovative approaches to implement immersive technology for training?

Authors: These posts are written or shared by QIC team members. We find this stuff interesting, exciting, and totally awesome! We hope you do too!
