|

In military training environments, critical incidents such as injury, friendly fire, and non-lethal fratricide may occur. Quantitative performance data from training and exercises is often limited, requiring more in-depth case studies to identify and correct the underlying causes of critical incidents. The present study collected Army squad performance, firing, and communication data during a dry-fire battle drill as part of a larger research effort to measure, predict, and enhance Soldier and squad close combat performance. Soldier-worn sensors revealed that some quantitatively rated top-performing squads also committed friendly fire and a fratricide. Therefore, case studies were conducted to determine what contributed to these incidents. This presentation aims to provide insight into squad performance beyond quantitative ratings and to underscore the benefits of more in-depth analyses in the face of critical incidents during training. Squad communication data was particularly valuable in diagnosing incident root causes. For the fratricide incident specifically, the qualitative data revealed a communication breakdown between individual squad members stemming from a non-functioning radio. The specific events leading up to the fratricide incident, and the squad’s response, will be discussed along with squad communication patterns among high and low-performing squads in the context of various critical incidents. We will examine how the conditions surrounding critical incidents and the underlying causes of those incidents can be recreated and manipulated in a simulated training environment, allowing instructors to control the incident onset and provide timely feedback and instruction.


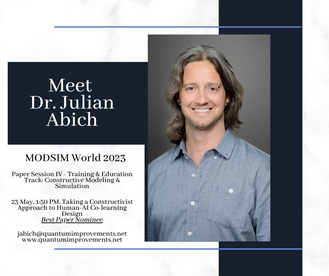

Artificial intelligence (AI) can facilitate personalized experiences shown to impact training outcomes. Trainees and instructors can benefit from AI-enabled adaptive learning, task support, assessment, and learning analytics. The instructional strategies implemented shape the learning and training benefits of AI. The co-learning strategy is the process of learning how to learn with another entity. AI co-learning techniques can encourage social, active, and engaging learning behaviors consistent with constructivist learning theory. While the research on co-learning among humans is extensive, human-AI co-learning needs to be better understood. In a team context, co-learning is intended to support team members by facilitating knowledge sharing and awareness in accomplishing a shared goal. Co-learning can also be considered when humans and AI partner to accomplish related tasks with different end goals. This paper will discuss the design of a human-agent co-learning tool for the United States Air Force (USAF) through the lens of constructivism. It will delineate the contributing factors for effective human-AI co-learning interaction design. A USAF maintenance training use case provides a context for applying the factors. The use case will highlight the initiative of leveraging AI to help close an experience gap in maintenance personnel through more efficient, personalized, and engaging support.

Jennifer Solberg, Ph.D., Founder & CEO

I have two younger brothers, and once a week, the Solberg kids have a phone call. We each hold management positions in the technology world, so we swap notes about work a lot. We may be one of the few families with a running joke about Kubernetes. After our call the other day, my brother Mike sent me this article from the National Bureau of Economic Research, and I have been geeking out over it ever since. From what I can tell, it’s one of the first studies on how generative AI can improve an organization’s effectiveness – and not just in terms of its bottom line.

Something I’ve been thinking about is how to train empathy. If you work in user experience, you understand how important empathy is to good design. Often, we end up working with solutions that suffer from “developer-centered design,” where features are built to check a box while minimizing the work for the development team. Also, we see “stakeholder-centered design,” where software development happens to impress someone with a pile of money. At the end of the day, if the people who need your solution can’t figure out how to use it, none of the rest of it matters.

Empathy means putting yourself in someone else’s shoes. More importantly, it involves caring about other people, which seems hard to come by these days. Wouldn’t it be great if we could make something that teaches people how to do that? For a long time, I wondered whether virtual reality could show you someone else’s perspective, and I still think it could. This study shows there may be a different way.

The study took place in a large software company’s customer support department. Working a help desk is a job where empathy is key to success. Not only do you have to be able to solve an irate and frustrated customer’s problem, you have to ensure they have a positive experience with you.

In this research, customer support agents were given an AI chat assistant to help them diagnose problems but also engage with customers in an appropriate way. The assistant was built using the same large language model as the AI chatbot everyone loves to hate, ChatGPT. The assistant monitored the chats between customers and agents and provided agents real-time recommendations for how to respond, which agents could either take or ignore. As a result, overall productivity improved by almost 14% in terms of the number of issues resolved. Inexperienced agents rapidly learned to perform at the same level as more experienced ones. The assistant was trained on expert responses, so following its advice usually gave you the same answer an expert would give.

Here’s where it gets really interesting: a sentiment analysis of the chats showed that as a result of using the assistant, there was an immediate improvement in customer sentiment. The conversations novice agents were having were nicer. The assistant was trained to provide polite, empathetic recommendations, and over a short period of time, inexperienced agents adopted these behaviors in their own chats. Not only were they better at solving their customers’ problems, but the tone of the conversation was overall more positive. The agents learned very quickly how to be nice because the AI modeled that behavior for them. As a result, customers were happier, management needed to intervene less frequently, and employee attrition dropped.

The irony of AI teaching people how to be better human beings is palpable. Are the agents that used the assistant more empathetic? We don’t know, but from a “fake it until you make it” perspective, it’s a good start. That aside, this study is an example of how this technology could help people with all sorts of communication issues function at a high level in emotionally demanding jobs. Maybe we should spend a little more time thinking about how it could help many people succeed where they previously couldn’t and focusing less on how it’s not particularly good at Googling things.

Julian Abich, Ph.D., Senior Human Factors Engineer

An estimated 602,000 new pilots, 610,000 maintenance technicians, and 899,000 cabin crew will be needed worldwide for commercial air travel over the next 20 years (Boeing, 2022). That’s about 30,000 pilots, 30,000 maintenance technicians, and 45,000 cabin crew trained annually. Additionally, urban air mobility is creating a new aviation industry that will require a different type of pilot and technician. It's clear there is a demand across the entire aviation industry to turn out new personnel at an increased rate.
The demand should not just be met by numbers, but also by knowledge and experience. But how can you train faster, cheaper, and yet still maintain (and exceed) current high standards? Is the answer extended reality (XR) technology? (Hint: that's part of the solution). These are the problems being tackled by the aviation community and discussed at the World Aviation Training Summit (WATS).

This year was the 25th anniversary of WATS. I've been attending and presenting at WATS for the past three years. In that time, I've seen the push for XR technology met with valid concerns for safety. If you know me, then you've heard me harp on the need for evidence-supported technology for training, so I can appreciate that stance. Technology developers have an ethical responsibility to conduct or commission the appropriate research before making claims of training effectiveness, efficiency, and satisfaction.

There are many companies that came to the table with case studies showing the training value of their solution. Other companies want to do the same but may need more opportunities or research support. On the other side, some commercial airlines have been conducting XR studies internally, but the results tend to stay within the organization. The regulating authorities (e.g., FAA, ICAO) need the most convincing. They need to see the evidence and clearly understand where XR will be implemented during training.

There may be an assumption that XR is the training solution, but XR is just part of a well-designed, technology-enabled training strategy. It was great to hear many presenters convey this same message and reiterate the need for utilizing a suite of media suitable for developing or enhancing the necessary knowledge, skills, and abilities. We need collaboration between researchers, tech companies, and airlines, sharing research results to show the value of XR at all levels within the aviation industry.
Otherwise, the work will continue to be done in silos, efforts will be duplicated, progress will be stunted, and personnel demands will fail to be met. We all need to contribute to the scientific body of knowledge if we want to move the industry in the direction needed to adapt and prosper.

Reference: Boeing. (2022, July 25). Boeing forecasts demand for 2.1 million new commercial aviation personnel and enhanced training. https://services.boeing.com/news/2022-boeing-pilot-technician-outlook |

Authors

These posts are written or shared by QIC team members. We find this stuff interesting, exciting, and totally awesome! We hope you do too!

Categories

All

Archives

June 2024

|
