Julian Abich, Ph.D., Senior Human Factors Engineer

Why collect subjective data from end users when evaluating a training device? I was asked this question at a conference last month, almost as if subjectivity were a dirty word. The short answer is that if your users don't like the training device, they likely won't use it. If they don't use it, you won't get the objective data you may be looking for.

The term "like" does not necessarily mean the training experience was pleasant. For instance, Soldiers, firefighters, surgeons, and others must train under difficult, often intolerable conditions to prepare for the real-world challenges of their jobs. "Liking" the training, in such cases, means that trainees see its value in preparing them to do their jobs successfully. When we collect subjective data, we also ask users whether their expectations were met, what emotional responses the experience evoked, and, most importantly, why. If we only gather objective data, such as the time to complete a task or the number of errors made, we are only getting half the story. Why did it take so long to complete the task, and why were certain errors made?

On a project for the U.S. Air Force, QIC's role was to conduct usability and user experience evaluations on a virtual flight simulator (Abich & Sikorski, 2022; Abich, Montalbano, & Sikorski, 2021). One of the most compelling responses I heard came from an instructor pilot who said, as he walked into the room, "This looks like training." I reflected on that and thought, "What does it mean to look like training? How does the user's initial impression affect the feedback provided? How does the design affect their motivation to give the training device a chance? And why should any of this matter if the training device does what it's supposed to do?"

The answers to these questions are subjective, as end users apply their unique expertise, experience, and expectations in evaluating the device. They are the ones who will have to use the devices, and they can see the value or potential shortcomings. If their lives depend on the skills being trained, you can bet they will be particularly critical of any new training device. So the next time you do a training device evaluation, embrace the subjective and don't worry about getting a little dirty. Otherwise, you may miss out on some highly valuable feedback.


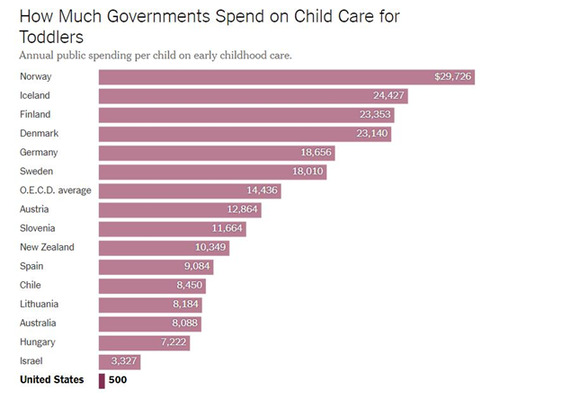

Shabnam Mitchell, PMP, PMI-ACP

Happy International Women's Day! As a mother working in the defense industry for a female-owned company, I must admit that today brings a mixed bag of emotions. For starters, this article from last month lists the high-profile, glass-ceiling-breaking women who have chosen to leave the workforce, stating, “The pattern has the potential to unwind decades of progress toward gender equity and increased female leadership in the workplace." Add to that recent policy setbacks that further disadvantage women, such as reductions in benefits to lower-income families primarily headed by single mothers. During the pandemic, over 2 million women left their careers to care for their families after schools shut down, and one in three childcare centers closed permanently. We are barely getting back into stride toward pre-pandemic employment numbers, but the field is far from level. Eve Rodsky’s book and subsequent documentary, Fair Play, further illustrate how the US has the worst family-friendly public policy in the developed world. For example, the US and Papua New Guinea are the only two countries in the world with no federal paid maternity leave.

The picture is rather stark. It's no surprise that women are burned out or burning out. Our society does not have the infrastructure to support women. According to lawyer and US Rep. Katie Porter, "There are lots of things we do in government that cost a lot. There is a hidden message there; it's just too expensive to support women. Wouldn't it be just cheaper if we just keep letting women do it all for free?" Thankfully, at QIC, I report to the COO, who meets with me weekly to ensure not only that my workload is balanced but also that I have the resources I need to be successful, including the flexibility necessary to maintain a work-life balance. I wish I could say this took tough conversations to achieve, but the truth is, our company culture not only encourages us to be upfront and authentic; it requires it.

While there is certainly more work to be done to support women, especially mothers, I am proud of the conversations I hear taking place around me. We are finally vocalizing so much of what has been deeply felt for too long. Looking ahead, I hope we can turn this one-day celebration into a year-round push for equality. If any of this interests you, check out this study from the LeanIn organization for more information and this site for ways to get involved.

United States Air Force (USAF) Tactical Aircraft Maintainers are responsible for ensuring aircraft meet airworthiness standards and are operationally fit for missions. Aircraft maintenance is the USAF's largest enlisted career field, accounting for approximately 25% of its active-duty enlisted personnel (US GAO, 2019). The USAF has successfully addressed maintenance staffing shortages in recent years, but the challenge has shifted to a lack of qualified and experienced maintainers (GAO, 2022). To address this issue, the USAF is seeking innovative ways to increase maintenance training efficiency, such as using artificial intelligence (AI) in the training environment (Thurber, 2021).
Specifically, the USAF has funded the development of an AI-driven co-learning partner that monitors student learning, predicts learning outcomes, and provides appropriate support, recommendations, and feedback (Van Zoelen, Van Den Bosch, & Neerincx, 2021). The AI co-learning agent goes beyond a personalized learning assistant: it predicts learning outcomes from student data, anticipates learner needs, and adapts continuously to meet those needs over time while maintaining trust and common ground. As a trusted partner, an AI co-learning agent has the potential to enhance learning by dynamically adapting to student needs and providing unique analytical insights. The challenge is developing an evolving agent that continually meets the learner’s needs as the learner progresses toward proficiency.

Our literature review revealed that designing an effective AI co-learning tool for initial adoption and sustained use requires observability, predictability, directability, and explainability (Bosch et al., 2019). Establishing trust and common ground are important factors that can affect the learner’s confidence in the agent and influence tool usage. Taking a user-centered design approach, we derived a series of heuristics to guide the development of an AI co-learning tool based on the identified factors. We then mapped technical feature recommendations onto each heuristic, resulting in 10 unique and modified heuristics with associated exemplar feature recommendations. These technical features formed part of the design documentation for an AI co-learning tool prototype.

The research-driven design heuristics will be presented along with the technical feature recommendations for the AI co-learning tool. The audience will gain practical insight into designing an effective human-AI co-learning tool to address training needs. The application extends beyond the immediate USAF need to other services, such as the U.S. Navy, where maintainer staffing has gradually declined (GAO, 2022).

Shabnam Mitchell

2023 is here, and I’m not ready. As the project manager at QIC, I ensure that our project objectives are met using techniques rooted in the Agile methodology. No project has felt too daunting to manage, nor any team too challenging to collaborate with. Yet here I am, staring January in the face, terrified of how I will manage my family and household. I need a plan. I need help. There’s going to be a crib-to-bed transition. Potty training. Swimming, ballet, soccer, gymnastics, skating, and karate classes. It’s. All. Too. Much.

How do I stem the hysteria? How do I tackle this overwhelm? I repeat: I’m a capable, successful project manager. Can’t I use the same tools at home to bring order to the chaos? As it turns out, I’m late to this revelation. According to Bruce Feiler (TED talk), Agile was just what his household needed to “cut parental screaming in half.” I was simultaneously floored and elated. I had spent a decade mastering a framework that I could have been using in my personal life all along.

I will spare you the history and details of Agile, but if you’re interested, here is an excellent place to start. In short, the purpose of Agile is to make progress in an ever-changing environment, using an empirical process to make decisions and ensure we move the needle in the right direction. As we understand at QIC, R&D contracts often come with many unknowns: they must meet tight timelines and budgets while leaving room for exploration, collaboration, and adaptation. Using Agile principles, our team meets at regular, predictable intervals to regroup, share progress and information, and continue to improve and deliver value.

On the home front, my husband and I connect after the kids’ bedtime over a warm beverage to look at the day ahead. This often involves laughing over who cried more, us or the kids, and finding ways to reduce tears. This is similar to the sprint retrospective, when the team discusses ways to work better together to avoid recurring problems. It is also like the daily standup, when the team shares what they will be doing for the day, enabling them to communicate roadblocks early and assist where necessary. Granted, we haven’t made much progress involving our 2- and 5-year-old kids in these discussions, even though they are key stakeholders, but I’d like to think that very soon we will. Empowering them to participate in the process will hopefully lead to cooperation and a happy home life.

Agile applied in the home is a compelling idea, one that I’m willing to try this year. If I fail, at least I’ll be failing fast! Would you try any of these approaches to household management in your personal life? What skills do you hone in your workplace that you could apply at home?

Dr. Jennifer Solberg, Founder & CEO

Last week, Congress blocked a $400 million award to Microsoft for the purchase of nearly 7,000 Integrated Visual Augmentation System (IVAS) headsets for the Army. The award would have followed the Army’s $125 million investment to develop the HoloLens-based augmented reality headset. The issue? IVAS proved spectacularly problematic in user testing last year, with 80% of Soldiers reporting physical discomfort, eye strain, nausea, and other issues within the first three hours of use. Instead, a $40 million contract was awarded to develop yet another version of IVAS…to Microsoft, the same company that delivered the current system.

On the one hand, as someone who conducts this kind of end-user research, I find it gratifying to see the Army doing extensive user experience testing before deploying something this invasive and, frankly, potentially dangerous. Using a HoloLens, or any other augmented reality headset, in a controlled environment is one thing. Having your field of view occluded by constantly changing data streams while you’re being shot at is potentially a human factors nightmare. At the very least, if Soldiers don’t like it, they won’t use it. And it turns out, they don’t. In addition, the methodology of this testing has been criticized by the Inspector General.

User research is critically important when you’re developing a capability your users will interact with daily. The process should start before the prototype stage, though. The impetus for IVAS did not come from the boots on the ground; it’s the result of nearly 20 years of research and development into head-worn augmented reality. It’s not clear whether Soldiers were ever asked “What are your problems?” before they were asked “Do you like this thing we’ve made to solve them?”

We see this problem a lot working in the training technology space. We’re regularly asked to develop solutions without talking to the intended users until a prototype is developed. I get it. Their time is valuable. They’re hard to get hold of. There’s a lot of bureaucracy involved. We don’t need to talk to them; we’re the experts. Yes, we’re the experts in how to apply the science behind why things work, and we’re the experts in designing the solution. We’re also the experts in evaluating the solution. But we can’t be the experts in how people do their jobs, the problems they have, the barriers to solving them, and their work environment without listening to them. Because it’s never as straightforward as it seems.

Where does IVAS go from here? Some would argue there are too many technical barriers to overcome right now. Others would argue that the contract should be recompeted instead of continuing to throw money at a sunk cost. I would argue the Army should take a step back and ask whether this solution is broadly the right one to solve the Infantry’s problems today.

Jennifer Murphy, Ph.D., Founder & CEO

As AI technology continues to advance, we are seeing more and more applications in the research and scientific fields. One area where AI is gaining traction is in the writing of research reports. AI algorithms can be trained to generate written content on a given topic, and some researchers are even using AI to write their research reports. While the use of AI to write reports may have some potential benefits, such as saving time and providing a starting point for researchers to build upon, there are also significant ethical concerns to consider.

One of the main ethical issues with using AI to write research is the potential for bias. AI algorithms are only as good as the data and information that is fed into them, and if the data is biased, the AI-generated content will be biased as well.
This can lead to the dissemination of incorrect or misleading information, which can have serious consequences in the research and scientific fields.

Another ethical concern with using AI to write research reports is the potential for plagiarism. AI algorithms can generate content that is similar to existing work, and researchers may accidentally or intentionally use this content without proper attribution. This can be a violation of copyright law and can also damage the reputation of the researcher and the institution they are associated with.

Additionally, using AI to write research reports raises questions about the ownership and control of written content. AI algorithms can generate content without the input or consent of the individuals who will ultimately be using it. This raises concerns about who has the right to control and profit from the content that is generated.

Overall, while the use of AI to write research reports may have some potential benefits, there are also significant ethical concerns to consider. It is important to carefully weigh the potential benefits and drawbacks of using AI in the writing of research reports and to consider the potential ethical implications of this technology.

I didn't write a word of that. It was produced by ChatGPT (https://chat.openai.com/), an AI chatbot that launched to the public on November 30. Since its launch, it's been at the forefront of the tech news cycle because it's both very good and very easy to use. Yesterday, I asked it to write a literature review on a couple of topics and pasted the results into QIC's Teams. Everyone got a weird, uneasy feeling reading it; we all joke about the day "the robots will take our jobs," but we hadn't realized that our jobs were on the list of those that could be so easily automated. Is the copy above particularly eloquent? No. Does it answer the mail for a lot of things? Yes. And sometimes, as they say, that's good enough for government work.

The QIC crew wasn't alone in their unease. Across the internet, authors are writing either to minimize the impact of technology like this or to demonize it. If you try hard enough, you can make it do racist things. It'll help kids cheat at school. But that's not really why we react to it the way we do, is it? It's the realization that something we thought made us human, the ability to create, is not something only we can do. In fact, we can't even do it as efficiently as something that is not only inhuman but not even alive.

While I was playing with ChatGPT, many of my friends were posting AI-generated stylized selfies made with the Lensa app. Its popularity reignited a similar discussion in the art community. Aside from the data privacy discussion we've been having since the Cambridge Analytica fiasco, artists are rightly concerned about ownership and the ability to make money from their work. At the core, though, it's the same fear: if AI can do your job, where does that leave you?

When we imagined robots taking over the world, many of us expected they would take the jobs we didn't want, like fertilizing crops and driving trucks. These were supposed to be the jobs that are physically exhausting, dangerous, and monotonous. They weren't supposed to be the ones we went thousands of dollars into student loan debt to be qualified to do. We didn't think it would be so easy for a machine to do something that, when we do it, reflects our feelings and thoughts. The phrase “intellectual property” presupposes an intellect, and an intellect presupposes a person.

As one who tries to maintain cautious optimism about the future of technology, I find it exciting that I may live to see the day when AI is far more efficient at most things than I am. Obviously, there’s a lot we have to consider from an ethics perspective. I’ve watched the Avengers movies enough times to appreciate the potential for Ultron to make decisions we might not like as a species.
But that day is coming, and it’s important to have those conversations now. On a broader level, it’s time we start thinking about what it means for us as creative people, what we value, and why we are special on this planet. Because I do believe that we are.

Jennifer Murphy, Ph.D., Founder & CEO (Taylor's Version)

Unless you either live under a rock or don’t have a relative/friend/boss who’s a Swiftie, you know that Taylor Swift concert tickets went on sale this week. You probably also know it was a catastrophe, with the blood and tears of millions of Swifties on Ticketmaster’s hands. It was enough to divert an entire news cycle away from Elon Musk. I’m here to tell you how this sort of thing might be avoided in the future.

@repenthusiasmts / Via Twitter: @repenthusiasmts

Here’s what happened: Prior to the presale, Swifties registered as “Verified Fans” through a partnership between Ticketmaster and Taylor’s management team, Taylor Nation. According to Ticketmaster’s reports, over 4 million fans signed up. The night before the presale, 1.5 million of them were notified by text and email that they had won the presale lottery and were given access codes to buy tickets the next day. If you had bought merchandise from the Taylor Swift store using the same email address as your Ticketmaster account, this helped your chances. I am simultaneously proud and embarrassed to say that I have dropped enough money on that website to score a presale code.

The following morning will live forever as one of the darkest days in the annals of Swiftie history. Over the course of the morning, 1.5 million fans tried to buy tickets simultaneously, along with 12.5 million other people…and resale bots. (If you want to learn about how these bots work, this is a good primer.) The site crashed in spectacular fashion, codes did not work, and many irate Swifties did not score tickets. Moments later, resale sites like StubHub and Vivid Seats showed plenty of inventory, marked up to $30,000 a ticket in some places. The whole point of fan verification was to prevent something like this from happening. So, what went wrong?

This is not a post about that. This is a post about how it could have gone. Non-fungible tokens, or NFTs, have a bad rep in some circles because they are often associated with “tech bro” culture and extremely ugly AI-generated cartoon apes. However, they are useful in situations where you want to tie a digital asset to its owner. If concert tickets were issued as NFTs, the organizer would have a record of each ticket’s owner on a blockchain and, more importantly, would know when that ticket was sold to someone else and how much it sold for. Keeping these records could limit price gouging, scalping, and ticket fraud. Because the ticket resides on a blockchain, the organizer could set resale price limits and conditions.

my1galaxy via redbubble

There are other benefits to using NFTs for ticket sales. Because this technology moves quickly and efficiently, it may be less likely to crash a website. Artists could attach digital collectibles to tickets as souvenirs of the show. Tickets could potentially be stored more securely in digital ticket wallets tied to a single device. And paying for tickets could be more seamless, too. Ticketmaster sees the value as well and has partnered with blockchain providers for other kinds of events.
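To make the resale rules described above a little more concrete, here is a minimal Python sketch of the bookkeeping an NFT ticket contract might perform: recording every owner and refusing resales above a price cap. It is purely illustrative and runs in memory rather than on a real blockchain. The class names, the `max_markup` parameter, and the 20% cap are hypothetical choices for this example, not anything Ticketmaster or a blockchain provider actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    """A ticket 'token': unique ID, current owner, and face value."""
    token_id: int
    owner: str
    face_value: float
    # Each transfer is appended as (seller, buyer, price), mimicking
    # the ownership history a blockchain would keep.
    history: list = field(default_factory=list)


class TicketLedger:
    """Hypothetical in-memory stand-in for an NFT ticket contract.

    Not a blockchain: it only models two rules an organizer might encode,
    recording every owner and capping the resale price.
    """

    def __init__(self, max_markup: float = 1.2):
        # Assumed policy: resale price must be <= face value * max_markup.
        self.max_markup = max_markup
        self.tickets: dict[int, Ticket] = {}

    def issue(self, token_id: int, fan: str, face_value: float) -> Ticket:
        """Mint a new ticket directly to a verified fan."""
        if token_id in self.tickets:
            raise ValueError(f"ticket {token_id} already issued")
        ticket = Ticket(token_id, fan, face_value)
        ticket.history.append((None, fan, face_value))  # None = organizer
        self.tickets[token_id] = ticket
        return ticket

    def resell(self, token_id: int, seller: str, buyer: str, price: float) -> None:
        """Transfer a ticket only if the seller owns it and the price is within the cap."""
        ticket = self.tickets[token_id]
        if ticket.owner != seller:
            raise PermissionError(f"{seller} does not own ticket {token_id}")
        cap = ticket.face_value * self.max_markup
        if price > cap:
            raise ValueError(f"price {price:.2f} exceeds resale cap {cap:.2f}")
        # The organizer now knows both the new owner and the sale price.
        ticket.history.append((seller, buyer, price))
        ticket.owner = buyer


if __name__ == "__main__":
    ledger = TicketLedger(max_markup=1.2)
    ledger.issue(1, "fan_a", 250.0)
    ledger.resell(1, "fan_a", "fan_b", 290.0)  # allowed: cap is 300.00
    try:
        ledger.resell(1, "fan_b", "scalper", 30000.0)
    except ValueError as err:
        print(err)  # price 30000.00 exceeds resale cap 300.00
    print(ledger.tickets[1].owner)  # fan_b
```

In an actual NFT ticketing system, rules like these could live in a smart contract so that each transfer and its price are recorded on-chain; the sketch only shows why an organizer holding that record would be in a position to refuse a $30,000 resale of a face-value ticket.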

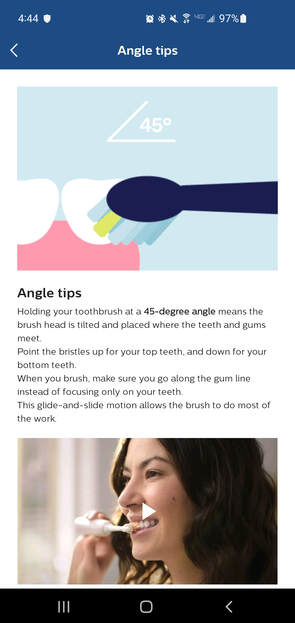

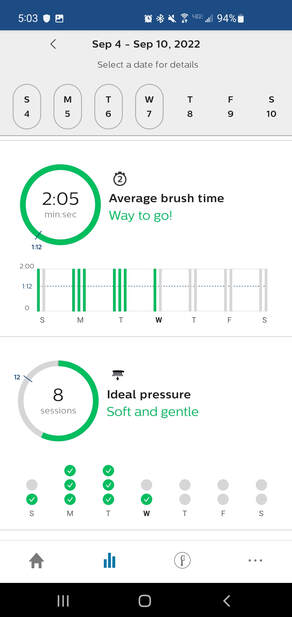

It'll be a while before the world buys Taylor Swift tickets on a blockchain, but there’s a good chance it’ll be better than not being able to buy Taylor Swift tickets at all. I, for one, am ready for it.

Dr. Eric Sikorski, Director of Programs & Research

I have been brushing my teeth for 40 years, which is approximately 1,000 hours of practice. That's not exactly the 10,000 hours needed to achieve mastery, according to K. Anders Ericsson, although it seems sufficient for a menial task. I didn’t think much about my toothbrushing proficiency, but if pressed, I would have said it’s “good enough.” However, my last few trips to the dentist indicated otherwise. Nothing major, but clear signs that my technique was flawed. So, I had a choice: continue to accept good enough or try to improve my performance. Yes, toothbrushing is a mundane task, but why not finally listen to the expert and do it better?

I am an underperforming toothbrusher not because I’m incapable of performing the task but because I formed unproductive habits and lacked the motivation and tools to overcome them. I would buy an inexpensive toothbrush and then mindlessly move it around my mouth for some reasonable amount of time twice a day. As a major step toward improvement, I bought an electric toothbrush (spurred on by my dentist, of course).

At QIC, we know that to push yourself to the next level, you need insight into your performance. Not only do you need enough data, you need the right data. It must be presented in a way that’s easy to understand at a glance, and it must be coupled with feedback. Knowing you’re not performing at your peak level isn’t enough; you need to know what to do to fix it! I was pleased to see these features in my toothbrush’s companion app. The brush itself gives you brushing guidance through a timer and a sensor.
The downloadable app provides insights into your brushing habits and, importantly, gives you specific strategies you can use to improve. Feedback on brushing frequency, average duration, and performance is displayed after each session, along with corrective actions such as “slow down.” Taken together, this Cadillac of toothbrushes is designed to break unproductive habits, model and maintain the correct behaviors, assess performance, and provide feedback. From a human factors and training perspective, it’s quite impressive!

Whether in government or private industry, our customers regularly face the choice between seeking opportunities for improvement and accepting good enough. Not about toothbrushing, necessarily, but about job tasks they have trained and practiced so often that they may not think much about them. The most elite performers are motivated to improve even on tasks that have become mundane. Despite that desire to constantly improve, how to improve may not always be clear to them. We, as human factors and training professionals, know how. Analyzing job tasks allows us to understand negative habits; well-designed training and performance support can help break those habits; active participation and demonstrable results can motivate; and assessment and proper feedback can maintain performance. Fighter pilots, CEOs, Navy SEALs, and other elite performers choose to shun good enough, whether in the mundane or the extraordinary. It is part of what makes them great, and we can help focus that motivation to make them even greater. In the meantime, let’s start with brushing our teeth… properly.

Jennifer Murphy, Ph.D., CEO & Founder

I recently had the privilege of joining Coach Matt Doherty on his webcast, where we talked about a variety of workplace training topics. My first conversation with him was probably one of the most memorable moments of my career to date, as it revolved around two of the things I care most about in the world: leadership and UNC basketball. For those of you who weren't blessed from birth to be a Tar Heel, he played on the 1982 National Championship team alongside Michael Jordan. He's coached at a variety of schools (including my alma mater, Davidson College), but he's best known for his three-year tenure as head coach of the UNC men's basketball team.

After the webcast, Coach sent me a copy of his book Rebound: From Pain to Passion as a token of thanks. I read it cover to cover the next day. In it, he discusses his rise as a coach to one of the most prestigious jobs in the game, the loss of that job, and how he was able to recover emotionally and professionally in the aftermath. He describes the feelings of loss, pain, and betrayal associated with falling off a high pedestal in an extremely public forum. I don't know many people who could recover from something that ego-shattering with grace.

Inherent to leadership is learning to be comfortable with people watching and evaluating you. You're on a stage all the time, even on work-from-home Fridays and during happy hour. Even for those of us who like the limelight, it takes a toll. One thing we never talk about is how, as leaders, to manage emotional pain while we're on that stage. It's one thing for a team member to go through a hardship; leaders rally around them, give them the space and support they need, and are patient while life is uncertain. But when it's you? Do you tell your team what's going on and risk freaking them out? Do you try to model strength in the face of adversity and act like nothing is wrong? Will they think you're making excuses? Will they be scared? Can you handle your whole world knowing that you're not OK?

Basketball teams play a lot of games, which is great for people like me who like to watch them. If you're the coach, that means you have the potential to take a lot of "Ls." In the government contracting space, we do too. I lose a lot more than I win, and that's hard. When you add personal loss on top of that, the cracks start to show. Throw in a pandemic, political tension, and everything else, and honestly, there are some bad days.

How do I deal with it? I tend to share, in the hope that when it's their turn, the crew will know they're in a safe place to ask for help. I also think they have a right to know if I'm not at 100%, and they should understand that this job is hard because one day, it might be theirs. But it's just as important for them to see me trying to take care of myself, giving myself some grace, and getting better. Is this the right way to handle it? I don't know. But I do know that the world isn't getting any kinder, and this should be a topic we talk about a lot more.

Thanks to Coach Doherty for having the courage to write about his experience and for sharing the lessons he learned from it. You can order Rebound: From Pain to Passion here: https://amzn.to/3Tl8vFV and watch the Rebound Live Webcast here: https://coachmattdoherty.com/webcast/

Michael Schwartz, Ph.D., Human Factors Engineer I

I recently attended the 13th Applied Human Factors and Ergonomics (AHFE) Conference in New York City. Like a busy NYC street, the conference offered an eclectic mix of human factors solutions highlighting advances in training, performance, safety, and usability in nearly every human endeavor. The presentations I saw at AHFE emphasized that human factors has embraced new technologies both as tools for investigation and as objects of study. Here are just some of the interesting things at AHFE 2022:

Like the massive screens in Times Square, the above items were certainly attention-grabbing, and my first reaction to much of what I saw was “cool.” Then, questions began to go through my mind as I started to think about what’s beyond this first impression. Is the alphabet instructional game enjoyable enough to motivate children to stay engaged? Is it intended to supplement parent-child interaction? Or replace it? The AR laser-based projection system may improve productivity, but does it improve safety? Do the workers understand the system and enjoy using it? Are autopsies currently being done poorly and, if so...how? You get the idea.

The AHFE keynote address by Dr. Michael van Lent, President and CEO of Soar Technology, discussed the 4th Industrial Revolution. This revolution is brought about by a combination of technologies that blur the lines between the physical, digital, and biological, allowing new information, products, and services to emerge. This led me to think about technology's intended and unintended uses and how humans are changed simply by interacting with technology. Often, we focus on the obvious, albeit “cool,” intended use without considering secondary and even tertiary human-centered benefits and consequences. For instance, I hope that a children’s game for teaching the alphabet is motivational and effective while also promoting rich social interaction between parents and children. As human factors researchers, we should consider how to measure the unintended benefits and how humans are changed by interacting with technology. For example, how does adaptive lighting in automobiles influence not only driver emotions but also driver focus and reaction time? While no single study can examine every effect, intended or unintended, our methods and measures must expand to account for the ever-increasing complexity of human-systems interaction. What types of questions do you ask when you see new tech or research? Are you able to move beyond the cool?

Authors

These posts are written or shared by QIC team members. We find this stuff interesting, exciting, and totally awesome! We hope you do too!
