Dr. Jacquelyn Schreck, Human Factors Engineer

Catch up with Part 1: Morning Routine, first!

“SEAA, what is going on outside?” I ask.

“It appears there is construction taking place, and traffic is not being rerouted.”

When I look outside, I am shocked to see that there is indeed a building being constructed where there used to be a large plot of land. This is land that was supposed to be turned into a park, but apparently, someone decided it would be better suited as an apartment complex. Obviously, this was not approved by the city, as the computers in these cars had no idea this issue would be here, and the city has an automated process that keeps people and cars up to date on the goings on. This land was previously said to be a dedicated park area, and I wonder if this building company has the approval to be building or if they are another company that has decided a plot of land would be better suited for something less green since it has become a priority to preserve what greenery we have left. What has changed is the citizens. We also care about keeping the environment healthy but apparently, this company does not. Sighing, I rest back against my seat and resume working for the remainder of my trip to the hospital.

Image created using Dall-E 2

When I arrive, SEAA, also integrated into my workspace, alerts me that my patient is already present and the operating room has been prepared.

“Good morning, doctor,” my patient says to me.

“Good morning. Are you ready for your appendectomy? It is a straightforward procedure, and our newest robotic programming, the one we are using on you today, has a 100% treatment rate.”

She gives me a tight smile and nods, but I know she will be pleased with the fact that she will be in and out of the operating room in under 10 minutes and able to walk out of the hospital within the hour. I prep my patient for surgery, and she is wheeled into the room.
A nurse puts my gloves on for me, which is really a redundant procedure since I will not be touching the patient, but in an emergency, it is possible, so it is better to be safe than sorry. During surgery, I still need to guide SEAA a couple of times, but overall, she performs flawlessly, and there is no need for any hands-on intervention from me. My only surgery of the day is done, so I think I will work from home analyzing abnormalities in the data sent from people’s home mirrors. I will easily be able to prescribe their medication for any ailments if needed.

SEAA summons the car, knowing it is my routine to leave right after my last surgery, and it’s waiting for me by the time I get to the door. Food for thought: How do you feel about putting your life in the hands of a robotic surgical program with the reassurance that a doctor is watching? Is it comforting or concerning that a robot is performing the surgery? Leave your thoughts in the comments! Don't forget to read part 3: Life Living in a Future Smart City: Evening Routine


Dr. Jacquelyn Schreck, Human Factors Engineer

We have long been in an era where the internet rules the world. All information is available at the touch of a finger on our smartphones, even our personal health information. Housing developments are up and coming in smart home communities, and people are trying to make this happen on an even larger scale with smart cities. The problem with smart cities, though, is the tech-centric design that current leaders in smart cities are pushing. This of course will not suit human needs and factors in a way that is feasible for all users. Smart cities are bound to have a high level of technology, and not everyone is on board with this. QIC has the ability to assess needs and expectations of citizens to help create better homes and cities. Here we present a case of a fictional future where our protagonist is living their life with the help of SEAA (Self-Efficient Automated Agent, pronounced see-uh), an automated agent that has been integrated into the lives of humans in the future. It is a mostly optimistic view on what could easily become dystopian.

Morning Routine

I wake up to my bedroom lights being turned on and my curtains being pulled open. SEAA (Self-Efficient Automated Agent), built into my smart home and several other aspects of my life, tells me the date and that the weather is slightly cloudy. Reluctantly, I get out of bed, making sure I step on the sensor so SEAA knows I have gotten up. I walk to the kitchen to make sure SEAA is already making my breakfast. Today is Wednesday, which means breakfast is three eggs with two pieces of toast. It is a little redundant that I check breakfast is in the works since I know SEAA knows my breakfast schedule, but I like to check anyway. I sit at the kitchen island with my eggs and two pieces of toast, realize I am in the mood for coffee, and ask SEAA for some.

“Coffee was not scheduled for this morning.
Would you like me to make some coffee for you?” SEAA asks for confirmation. “Yes, please make some coffee.” After eating breakfast, I make my way to the bathroom and stand in the mirror that takes in my body composition. Any data collected from the mirror will be sent to my doctor and analyzed for any abnormalities. Just last week, my friend had an infection in her ear, and antibiotics arrived at her door (delivered via drone) before she left for work. Luckily, it appears that nothing has been immediately detected for me this morning (though I know a doctor will review it more thoroughly later), so I continue about my routine. I brush my teeth with a toothbrush that vibrates every 30 seconds to tell me it’s time to clean another part of my mouth. When I remove the toothbrush from my mouth, SEAA says, “you had coffee this morning, so you need to brush for an extra 30 seconds.” Slightly annoyed, I put my toothbrush back in my mouth and finish brushing my teeth. It is time to get dressed, so I make my way over to my walk-in closet. I could have SEAA pick out an outfit for me, but I enjoy choosing my clothes myself. Once I have landed on a red dress and a pair of burgundy flats, I feel I am ready to go, but my smart mirror thinks otherwise. “Your dress and shoes do not match perfectly. There are better options in your closet. Would you like a recommendation?” SEAA asks. I allow her to make the recommendation for me, but ultimately, I go with what I put on originally.

Now that I am dressed and ready to go to work, I make my way to the car, which is perfectly heated to 75 degrees on this chilly morning. Once inside and seated, I pull out my laptop and begin work since I do not even need to monitor the car driving itself. Quite a few years ago manual override was completely removed from cars, meaning humans don’t have the ability to take over even if they feel the need to. This made me uncomfortable at first, but since most cars on the road are also driverless, car accidents are extremely rare and usually involve an older model car that still allows human override. SEAA, who is also in my smart car, informs me that there is extra traffic this morning and that we might be a little late for work. I have a patient this morning and worry I will be late to the hospital. I look out the window, and I am surprised at what I see is causing the holdup…

Food for thought: How do you feel about SEAA parenting the protagonist on brushing her teeth and matching her clothing? Is this helpful? Annoying? Would you like everything to be scheduled all the time, like the breakfast in this story, or would you want to make the choice in the morning when it is actually time to eat? Let us know your thoughts in the comments!

Don't forget to read part 2: Life Living in a Future Smart City: Daytime

Dr. Eric Sikorski, Director of Programs & Research

How many of you have witnessed it? A group of cyclists or runners taking over the trail, roadway, or sidewalk. The majestic herd in their natural habitat. Perhaps you have even been a part of such a herd. Once a week, I attend a local mountain bike group ride and was recently pondering the potential benefits in terms of learning and performance, beyond the social aspects. Some answers came after witnessing an interesting sequence of events a few weeks ago.
Here's how it unfolded: A faction of approximately 15 riders took off quickly from the meeting point, speeding over jumps, negotiating turns, and crossing streams. After reaching the top of a long climb and taking a collective breather, one of the riders exclaimed "(name) will never want to ride with us again" implying that it's too difficult. That rider, appearing somewhat offended, retorted "what are you talking about? I ride 30 miles a week! I'm fine." That same rider started the next segment near the front of the pack, at a fast pace. The group can motivate us to work harder and test our limits more than if we were on our own. The reasons for this are a complex array of personality and social psychology factors. Wanting to look competent in front of your peers can be an extrinsic motivator. Not wanting to drop too far back from the pack is a survival instinct that far predates the invention of the wheel, let alone the mountain bike. Then, the rider who was previously forced to defend his honor fell... twice, within just a few minutes. The train of riders behind him had to wait as he untangled his bike from a fellow rider (the first time) and from some shrubbery (the second time). To the fallen rider's credit, he kept an awesome positive attitude, apologized to the group, dusted himself off, and kept moving down the trail. There is a potential danger in pushing past our limits when with a group. It can lead to repeated mistakes as we choose not to let up at risk of performing below the group's standards. We start trying too hard, we miscalculate, and that normally simple obstacle becomes a hazard. When we make a mistake we fear that everyone is judging and mocking us, which becomes a distraction. We get "in our head" and may not have, or want to take, the time to reset like we would if riding alone.

At the next break, a member of our group politely told the rider who fell that his seat may be too high, which could impair balance. Immediately, he adjusted his seat. From then on, I heard instruction being given and I observed adjustments being made, such as position on the bike and foot placement when negotiating challenging obstacles. The dynamic of those two riders became that of a mentor and mentee. The impromptu coaching from a group member was unexpected. It also risked offending, but the instruction was given in a humble and supportive way and received with grace and curiosity. Even with just some basic instruction and minor adjustments, the rider's improvement was noticeable. This sequence of error, observation, and instruction coupled with the riders' willing spirits led me to think about the group ride as more than just a motivator but also as a powerful learning tool. Incidentally, I also benefitted from the instruction and have to assume I was not the only one, beyond the intended recipient.

So, when music and YouTube videos just aren't enough to up your performance, I suggest joining a local group ride. You never know what you might learn. Until then, keep collaborating and enjoy the ride!

Dr. Eric Sikorski, Director of Programs & Research

Do you listen to music while exercising? Does music make you feel stronger, faster, and/or more focused? You’re not alone, as research suggests that music can improve performance in endurance, sprint, and resistance exercise. This is due to a combination of psychological and physiological effects such as more positive affect, lower subjective fatigue, and increased cardiac output and oxygen consumption. Given this evidence, I decided to listen to music during a recent ride. On that “musical ride”, I felt more comfortable on the bike, faster on the course, and more willing to take on difficult obstacles.
Time also seemed to pass quickly, and the climbs were less of a slog. While I felt different on this ride, the objective data indicated my performance (e.g., average pace, maximum speed, elevation gained) was about the same as my previous, sans music, rides. Of course, this was only one session and there are several factors that were not controlled for such as course conditions, sleep, nutrition, hydration, and even exact tire pressure. Still, the subjective feeling of awesomeness contrasted with my actual mediocre performance is worth exploring. The feeling awesome part of my ride can likely be attributed to music’s ability to elevate mood and motivate, making those uphill sections less dreadful and increasing my will to tackle a challenging section. The music may have also served as a positive distraction from my tired legs. I was, at times, in a flow state, fully immersed with a focus on the activity and a reduced self-consciousness about effective execution. There are several explanations for why the music did not result in improved performance despite the elevated feelings. While music helped me focus and be less conscious of fatigue, my skills remained at a novice level. Difficult obstacles were difficult and time-consuming, even if I was more willing to take them on with the help of artists such as Linkin Park. The tunes may have also diverted too much attention from the task, such as not allowing for mental rehearsal as I entered a technical trail section.

Interestingly, music preference can mediate exercise performance benefits. For the ride in question, I went with a curated “mountain biking” Spotify playlist where I enjoyed some of the songs but many were skips. This may have limited the sustained benefits of music on this ride. Incidentally, reaching for the skip button on my earbuds may have created an additional distraction, reducing speed and taking me out of my flow state.

While the research points to psychological and physiological benefits of listening to music while exercising, do not expect that to translate immediately into objective performance gains. To that end, there is no substitute for proper instruction (see previous musing “Can YouTube Teach Me How to Ride?”) and deliberate practice to develop your competency. Music can be a great companion as you log those hours, and a motivator as you test your skills on increasingly challenging technical sections. So keep grooving and enjoy the ride!

We're going to take next week off; Happy Independence Day! We'll follow up with the final post in the series on the value of Groups in training, the following week!

Dr. Eric Sikorski, Director of Programs & Research

Have you used YouTube to learn a new skill? According to a 2018 Pew Research Center study, over 50% of U.S. YouTube users, which is approximately 35% of all U.S. adults, indicated “the site is very important when it comes to figuring out how to do things they haven’t done before.” I can just hear my Instructional Designer friends saying “yes, but videos on their own may not be enough.” After purchasing a shiny new mountain bike and waiting for it to be shipped, I started consuming YouTube videos centered on the fundamentals of mountain bike riding. There are numerous videos on the topic, and many are quite good, with clear explanations and demonstrations in real-time and slow motion.
When my bike arrived, I felt confident and ready to tear up the trails with my newly acquired YouTube-based knowledge. Not so fast! In fact, my first ride was quite slow and objectively pretty bad. The video-based instruction not transferring to real-world performance of a complex psychomotor skill is not surprising. An obvious issue is that I did not perform the task immediately after video demonstration. For instance, I could have watched a video on a specific skill, such as cornering, at the top of the hill then attempt to execute while careening down. Still, time from demonstration to practice does not seem like the complete answer. I thought about M. David Merrill’s First Principles of Instruction, described below, and why videos are just part of a more comprehensive learning strategy. Task / Problem Centered: Learning must be in the context of a real-world problem. One limitation is that I did not have baseline data, so my approach to viewing online content was broad rather than focusing on a specific task or problem, such as cornering or braking techniques. Activation: New knowledge must be built on prior learning. YouTube video creators are appealing to a general audience without the luxury of a learner analysis. That leaves it to the viewer to reflect on their prior knowledge and see where the new learning fits. It would have been beneficial to think about what I know, such as riding a road bike, and how that may apply to what I saw in the videos. Demonstration: Videos can effectively provide learners with practical demonstrations of specific skills and proper techniques. The mountain bike training videos typically included a verbal explanation followed by an on-bike practical demonstration, often with narration. The challenge was not having my own experience as a point of reference, such as how demonstrated techniques “feel” on a bike. Application: Use the new knowledge in a meaningful way, through practical application. 
While riding, I identified knowledge and skill gaps based on indicators such as apprehension and mistakes then made mental notes that I wrote down after the ride. Integration: Continue to apply the new knowledge and build on it. This principle highlights the main flaw in my original thinking. It is unreasonable to expect to go from watching videos to proficiency. Similarly, practice alone is not enough. I consider Merrill’s First Principles a cyclical process of continually defining and refining the problem to be solved, building on prior knowledge, viewing demonstrations with an emphasis on targeted areas for improvement, and deliberate practice toward mastery. The next time you want to learn a new skill and are about to type some words into that YouTube search bar, take a moment to think about how the videos fit into a more comprehensive approach. With so much web content readily available, including engaging videos, it is easy to forget that demonstration is just part of the whole. In a world that expects immediate results, as I mistakenly did, it is also easy to forget that complex skills require extensive practice, so keep learning and enjoy the ride!

In the next post we'll take a look at music and performance!

In military training environments, critical incidents such as injury, friendly fire, and non-lethal fratricide may occur. Quantitative performance data from training and exercises is often limited, requiring more in-depth case studies to identify and correct the underlying causes of critical incidents. The present study collected Army squad performance, firing, and communication data during a dry-fire battle drill as part of a larger research effort to measure, predict, and enhance Soldier and squad close combat performance. Soldier-worn sensors revealed that some quantitatively rated top-performing squads also committed friendly fire and a fratricide. Therefore, case studies were conducted to determine what contributed to these incidents.
This presentation aims to provide insight into squad performance beyond quantitative ratings and to underscore the benefits of more in-depth analyses in the face of critical incidents during training. Squad communication data was particularly valuable in diagnosing incident root causes. For the fratricide incident specifically, the qualitative data revealed a communication breakdown between individual squad members stemming from a non-functioning radio. The specific events leading up to the fratricide incident, and the squad’s response, will be discussed along with squad communication patterns among high and low-performing squads in the context of various critical incidents. We will examine how the conditions surrounding critical incidents and the underlying causes of those incidents can be recreated and manipulated in a simulated training environment, allowing instructors to control the incident onset and provide timely feedback and instruction.

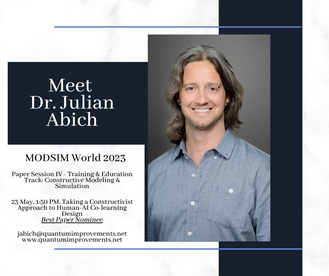

Artificial intelligence (AI) can facilitate personalized experiences shown to impact training outcomes. Trainees and instructors can benefit from AI-enabled adaptive learning, task support, assessment, and learning analytics. Impacting the learning and training benefits of AI are the instructional strategies implemented. The co-learning strategy is the process of learning how to learn with another entity. AI co-learning techniques can encourage social, active, and engaging learning behaviors consistent with constructivist learning theory. While the research on co-learning among humans is extensive, human-AI co-learning needs to be better understood. In a team context, co-learning is intended to support team members by facilitating knowledge sharing and awareness in accomplishing a shared goal. Co-learning can also be considered when humans and AI partner to accomplish related tasks with different end goals. This paper will discuss the design of a human-agent co-learning tool for the United States Air Force (USAF) through the lens of constructivism. It will delineate the contributing factors for effective human-AI co-learning interaction design. A USAF maintenance training use case provides a context for applying the factors. The use case will highlight the initiative of leveraging AI to help close an experience gap in maintenance personnel through more efficient, personalized, and engaging support.

Jennifer Solberg, Ph.D., Founder & CEO

I have two younger brothers, and once a week, the Solberg kids have a phone call. We each hold management positions in the technology world, so we swap notes about work a lot. We may be one of the few families with a running joke about Kubernetes. After our call the other day, my brother Mike sent me this article from the National Bureau of Economic Research, and I have been geeking out over it ever since. From what I can tell, it’s one of the first studies on how generative AI can improve an organization’s effectiveness, and not just in terms of its bottom line.

Something I’ve been thinking about is how to train empathy. If you work in user experience, you understand how important empathy is to good design. Often, we end up working with solutions that suffer from “developer-centered design,” where features are built to check a box while minimizing the work for the development team. Also, we see “stakeholder-centered design,” where software development happens to impress someone with a pile of money. At the end of the day, if the people who need your solution can’t figure out how to use it, none of the rest of it matters.

Empathy means putting yourself in someone else’s shoes. More importantly, it involves caring about other people, which seems hard to come by these days. Wouldn’t it be great if we could make something that teaches people how to do that? For a long time, I wondered whether virtual reality could show you someone else’s perspective, and I still think it could. This study shows there may be a different way.

The study took place in a large software company’s customer support department. Working a help desk is a job where empathy is key to success. Not only do you have to be able to solve an irate and frustrated customer’s problem, you have to ensure they have a positive experience with you.
In this research, customer support agents were given an AI chat assistant to help them diagnose problems but also engage with customers in an appropriate way. The assistant was built using the same large language model as the AI chatbot everyone loves to hate, ChatGPT. The assistant monitored the chats between customers and agents and provided agents real-time recommendations for how to respond, which agents could either take or ignore. As a result, overall productivity improved by almost 14% in terms of the number of issues resolved. Inexperienced agents rapidly learned to perform at the same level as more experienced ones. The assistant was trained on expert responses, so following its advice usually gave you the same answer an expert would give.

Here’s where it gets really interesting: a sentiment analysis of the chats showed that as a result of using the assistant, there was an immediate improvement in customer sentiment. The conversations novice agents were having were nicer. The assistant was trained to provide polite, empathetic recommendations, and over a short period of time, inexperienced agents adopted these behaviors in their own chats. Not only were they better at solving their customers’ problems, but the tone of the conversation was overall more positive. The agents learned very quickly how to be nice because the AI modeled that behavior for them. As a result, customers were happier, management needed to intervene less frequently, and employee attrition dropped.

The irony of AI teaching people how to be better human beings is palpable. Are the agents that used the assistant more empathetic? We don’t know, but from a “fake it until you make it” perspective, it’s a good start. That aside, this study is an example of how this technology could help people with all sorts of communication issues function at a high level in emotionally demanding jobs. Maybe we should spend a little more time thinking about how it could help many people succeed where they previously couldn’t and focusing less on how it’s not particularly good at Googling things.

Julian Abich, Ph.D., Senior Human Factors Engineer

An estimated 602,000 new pilots, 610,000 maintenance technicians, and 899,000 cabin crew will be needed worldwide for commercial air travel over the next 20 years (Boeing, 2022). That’s about 30,000 pilots, 30,000 maintenance technicians, and 45,000 cabin crew trained annually. Additionally, urban air mobility is creating a new aviation industry that will require a different type of pilot and technician. It's clear there is a demand across the entire aviation industry to turn out new personnel at an increased rate.
The demand should not just be met by numbers, but also by knowledge and experience. But how can you train faster, cheaper, and yet still maintain (and exceed) current high standards? Is the answer extended reality (XR) technology? (Hint: that's part of the solution). These are the problems being tackled by the aviation community and discussed at the World Aviation Training Summit (WATS). This year was the 25th anniversary of WATS. I've been attending and presenting at WATS for the past three years. In that time, I've seen the push for XR technology met with valid concerns for safety. If you know me, then you've heard me harp on the need for evidence-supported technology for training, so I can appreciate that stance. Technology developers have an ethical responsibility to conduct or commission the appropriate research before boasting claims of training effectiveness, efficiency, and satisfaction. There are many companies that came to the table with case studies showing the training value of their solution. Other companies want to do the same but may need more opportunities or research support. On the other side, some commercial airlines have been conducting XR studies internally, but the results tend to stay within the organization. The regulating authorities (e.g., FAA, ICAO, etc.) need the most convincing. They need to see the evidence and clearly understand where XR will be implemented during training. There may be an assumption that XR is the training solution but XR is just part of a well-designed, technology-enabled training strategy. It was great to hear many presenters convey this same message and reiterate the need for utilizing a suite of media suitable for developing or enhancing the necessary knowledge, skills, and abilities. We need collaboration between researchers, tech companies, and airlines, sharing research results to show the value of XR at all levels within the aviation industry. 
Otherwise, the work will continue to be done in silos, efforts will be duplicated, progress will be stunted, and personnel demands will fail to be met. We all need to contribute to the scientific body of knowledge if we want to move the industry in the direction needed to adapt and prosper.
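
For readers who want to check the annual training figures quoted from the Boeing forecast, here is a quick sketch of the arithmetic in Python. It assumes the 20-year demand is spread evenly across the years, which is a simplification the forecast itself does not claim, and the variable names are purely illustrative.

```python
# Boeing (2022) 20-year personnel forecast, as quoted in the post.
forecast = {
    "pilots": 602_000,
    "maintenance technicians": 610_000,
    "cabin crew": 899_000,
}
YEARS = 20  # forecast horizon stated in the post

# Assumption: demand is spread evenly over the horizon.
annual = {role: count / YEARS for role, count in forecast.items()}
total = sum(forecast.values())

for role, per_year in annual.items():
    print(f"{role}: about {per_year:,.0f} per year")
print(f"total over {YEARS} years: {total:,}")
```

Under that even-split assumption the yearly figures come to 30,100 pilots, 30,500 maintenance technicians, and 44,950 cabin crew, in line with the rounded numbers in the post, and the three categories sum to roughly 2.1 million people, matching the headline of the Boeing release.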

Reference: Boeing. (2022, July 25). Boeing forecasts demand for 2.1 million new commercial aviation personnel and enhanced training. https://services.boeing.com/news/2022-boeing-pilot-technician-outlook

Extended reality (XR) technologies have been utilized as effective training tools across many contexts, including military aviation, although commercial aviation has been slower to adopt these technologies. While there is hype behind every new technology, XR technologies have evolved past the emerging classification stage and are at a state of maturity where their impact on training is supported by empirical evidence. Diffusion of innovation theory (Rogers, 1962) presents key factors that, when met, increase the likelihood of adoption. These factors consider the relative advantage, trialability, observability, compatibility, and complexity of the XR technology. Further, there are strategic approaches that should be implemented to address each of these innovation diffusion factors. This presentation will discuss each diffusion factor, provide exemplar use cases, and outline evidence-backed considerations to improve the probability of XR adoption for training. Considerations will discuss various effects that may occur with the introduction of XR technology, such as the novelty effect, where performance initially improves due to the new technology and not because of learning. Key questions will be presented that should be addressed under each diffusion factor that will help guide the information needed to support the argument for XR adoption. Importantly, the quality of research evidence to support XR implementation and adoption is critical to reducing the risk of ineffective training. Therefore, a discussion of research-related considerations will also be presented to ensure an appropriate interpretation of existing XR research literature.
The goal is to provide the audience with an objective lens to help them determine whether XR technologies should be adopted for their training needs.

World Aviation Training Summit, April 18-20, 2023, Orlando, FL. April 20, 11:15 AM: Improving the Probability of XR Adoption for Training

Authors: These posts are written or shared by QIC team members. We find this stuff interesting, exciting, and totally awesome! We hope you do too!
